Research News

Protected Bike Lanes Causally Increase NYC Bikeshare Ridership, But Benefits Are Not Distributed Equally

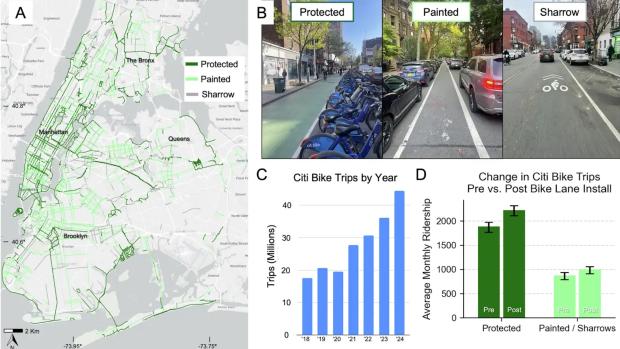

Protected bike lanes increase Citi Bike ridership in New York City, but painted bike lanes and sharrows do not show a statistically significant causal effect on ridership after accounting for confounding factors, according to a new study from researchers at NYU's Tandon School of Engineering published this week in npj Sustainable Mobility and Transport.

The findings address a longstanding question in transportation planning: whether, and to what extent, different types of bicycle infrastructure actually encourage more people to ride.

Protected bike lanes physically separate cyclists from vehicle traffic using barriers such as curbs, parked cars, or flexible posts. Painted bike lanes provide only a painted stripe between cyclists and cars, while sharrows are bicycle symbols painted onto shared traffic lanes.

Using approximately 72 million Citi Bike trips recorded between 2013 and 2024 (a period of significant ridership growth), the researchers linked trip data to bicycle infrastructure located near stations across New York City.

Initial results suggested that both protected and painted facilities were associated with increased ridership. Stations near newly installed protected bike lanes saw an average increase in trips of 18%, while stations near painted bike lanes and sharrows experienced an average increase of about 14%.

However, those initial before-and-after comparisons do not account for the fact that bike lanes are often installed in areas where cycling activity is already increasing. To isolate the effects of the infrastructure itself, the researchers used propensity score matching and difference-in-differences analysis, statistical methods designed to compare similar locations while controlling for pre-existing neighborhood characteristics and ridership trends.
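The logic of a difference-in-differences comparison can be sketched in a few lines. The example below is a minimal illustration, not the study's actual analysis: the ride counts are invented toy numbers, the function name `did_estimate` is hypothetical, and the propensity score matching step (choosing comparable control stations) is assumed to have already happened.

```python
from statistics import mean


def did_estimate(treated_before, treated_after, control_before, control_after):
    """Change at treated stations minus change at matched control stations."""
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change


# Toy monthly ride counts per station (invented for illustration only).
treated_before = [1000, 1200, 1100]   # stations that later get a protected lane
treated_after = [1500, 1700, 1600]
control_before = [900, 1000, 1100]    # similar stations with no new lane
control_after = [1100, 1200, 1300]

# A naive before-and-after comparison credits the lane with the whole increase.
naive_change = mean(treated_after) - mean(treated_before)

# Difference-in-differences subtracts the growth the control stations saw anyway.
effect = did_estimate(treated_before, treated_after, control_before, control_after)

print("naive change:", naive_change)
print("difference-in-differences estimate:", effect)
```

In this toy case the naive comparison shows 500 extra rides per station, but 200 of those also appear at the control stations, so only 300 are attributed to the new lane. The same subtraction explains how an apparent gain near painted lanes can shrink to statistical insignificance once background growth is removed.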

After applying those methods, only protected bike lanes showed a statistically significant causal effect on Citi Bike ridership. The researchers estimated an average increase of approximately 379 additional rides per station per month following installation of protected lanes. In contrast, painted bike lanes and sharrows did not show a statistically significant causal effect on ridership.

"Not all bike lanes are created equal," said Takahiro Yabe, Assistant Professor in the Department of Technology Management and Innovation (TMI) and the Center for Urban Science + Progress (CUSP) at NYU Tandon School of Engineering. "When cities invest in cycling infrastructure, the design details can determine whether a lane simply exists on a map or actually changes how people travel. That matters for transportation, public health, and sustainability, especially when cities are making difficult choices about how to invest limited resources."

"Painted bike lanes and sharrows may cost less and face less political pushback, but we now have evidence at a massive scale that protected bike lanes are really what can move the needle on ridership," said Marcel Moran, the lead author of the paper. Moran is currently an Assistant Professor at San José State University, and was a Faculty Fellow at CUSP during this project.

The study also examined whether the effects of protected bike lanes differed across neighborhoods. The researchers found that the positive ridership effect was statistically significant only in Census block groups with the lowest share of Black residents. In neighborhoods with higher shares of Black residents, they did not detect a statistically significant causal effect on Citi Bike ridership.

"Protected bike lanes seem to work best where cycling was already a realistic option for people," said Malik Salman, a paper co-author. Salman is an NYU CUSP alumnus and currently a CUSP Research Scholar in Yabe's lab. "In communities where residents face other barriers (cost, discriminatory policing, a history of being left out of the planning process), the infrastructure alone may not be enough to change behavior. That's not an argument against building protected lanes. It's an argument for doing more alongside them."

The results were more encouraging for older adults. In Census block groups with the highest share of residents between ages 60 and 79, protected bike lanes produced particularly strong ridership gains. The researchers suggest that older adults may be especially responsive to infrastructure that reduces perceived traffic-safety risks. This aligns with evidence from cities like Copenhagen, which has both an expansive network of protected bike lanes and high ridership among older adults.

The study comes as New York City's bicycle network has expanded from roughly 900 miles of bike lanes in 2014 to approximately 1,500 miles by 2024, while Citi Bike recorded a system-high of roughly 45 million trips in 2024. The authors say their methodology could be applied to other cities with publicly available bikeshare and bike-lane data, including Chicago, Boston, San Francisco, and Washington, D.C.

Moran, M., Salman, M. & Yabe, T. Heterogeneous impacts of protected bike lanes on bikeshare behavior across demographic groups in New York. npj Sustain. Mobil. Transp. 3, 39 (2026). https://doi.org/10.1038/s44333-026-00107-2

A 3-D Printed Stent, Shaped Like a Lily, Could Speed Recovery After Weight-Loss Surgery

Each year, about 250,000 Americans undergo sleeve gastrectomy, one of the most common weight-loss operations in the United States. For most patients, recovery is uneventful.

But for a small share — between one and three percent in routine cases, and as many as one in ten in revision surgeries — the procedure can leave behind a gastric leak, in which fluid escapes from the stomach and forms an abscess.

Treating those leaks can be a long process. Doctors usually rely on endoscopic internal drainage, threading a small plastic tube called a double-pigtail stent through the stomach wall so the fluid can drain. But the devices typically used are built for bile ducts, not for the oddly shaped cavities created by gastric leaks.

That mismatch matters. The stents can slip, drain slowly and require repeated procedures before the leak resolves.

Now, researchers at New York University say they have found a better approach by changing the shape of the stent itself. Their findings have been published in Advanced Healthcare Materials.

Their prototype, called the Lily stent, is the first product of a design framework the researchers named PETALS, for Personalized Endoscopic Transmural Abscess Leak Solution. The framework is a mathematical approach to optimizing drain shape for complex biological fluids, and the team says it could be applied beyond gastric leaks to other drainage challenges in the body.

Using computer simulations and mathematical modeling, the researchers found that length and inner diameter mattered most, while the curled anchoring ends had little effect on fluid flow. Counterintuitively, a wider tube does not drain better. Increasing the inner diameter shrinks the gap around the outside of the stent, where most of the fluid actually travels. That means exterior topography, not interior volume, is the primary driver of drainage performance.

That insight is the foundation of the PETALS framework. By mathematically optimizing the outer surface geometry for the viscosity and pressure of gastric fluid, the team arrived at their new stent, a six-part structure that creates more effective routes for fluid to move around the device.

“The key insight is that the geometry of the tube’s cross-section, especially the exterior surface, fundamentally determines how fast fluid moves through and around it,” said Khalil Ramadi, an assistant professor at NYU Abu Dhabi and NYU Tandon School of Engineering and the study’s senior author. “We’re not just making it out of a different material. We’re changing the shape to make it work better.”

If the results hold up beyond the lab, the payoff could be meaningful. Roughly 2,500 people in the United States require treatment each year for gastric leaks after bariatric surgery. Faster drainage could shorten recovery and reduce the need for repeat procedures, easing both the burden on patients and the cost of care.

The device is still early in development. So far, its drainage performance has been tested only in simulations and benchtop models. Further animal studies will be needed before it can move closer to clinical use.

The Lily stent also proved more flexible than the commercial polyethylene device it is meant to replace, an attribute surgeons associate with better patient tolerance and reduced tissue damage. In short-term animal studies, tissue surrounding the implanted material showed no significant difference from tissue around standard polyethylene, an early indicator of biocompatibility.

The researchers note that the Lily design's constant cross-section geometry would allow it to be manufactured by conventional extrusion methods, without requiring hospitals to invest in 3-D printing infrastructure.

"Our work shifts the focus from just placing a stent to engineering its function at a structural level," said Parima Phowarasoontorn, a research assistant in Ramadi’s NYU Abu Dhabi lab and the paper’s first author. "Instead of simple tubes, we introduce cross-sectional designs that improve drainage while remaining compatible with existing endoscopic delivery procedures."

The Lily stent research follows the Ramadi lab's announcement of the CORAL capsule, an ingestible pill whose coral-like structure traps bacteria from the small intestine, enabling researchers to study microbial communities that contribute to certain diseases. Both devices draw on nature's own geometry to solve medical problems that conventional tools have struggled to address.

Phowarasoontorn P, Ko Y, Barajas-Gamboa JS, Pantoja JP, Al-Ketan O, Ali M, Sohn S, Naser HT, Dabbour AH, Khlaifat B, AlZubaidi A, Vega CA, Rodriguez J, Kroh M, Ramadi KB. Enhanced Endoscopic Internal Drainage of Gastric Abscess Through Additively Manufactured Stents. Adv Healthc Mater. 2026 Apr 2:e05860. doi: 10.1002/adhm.202505860.

New Research Suggests Consistency, Not Complexity, Is the Key to Teaching Robots Dexterity

Teaching robots to manipulate objects with humanlike dexterity has long been one of robotics’ toughest challenges. Tasks such as rotating an object in-hand or coordinating two robot arms to maneuver a bulky item require constant changes in contact, grip and motion, skills that are difficult both to program and to demonstrate through human teleoperation.

Now researchers from NYU Tandon and the Robotics and AI Institute have shown that robots may be able to learn these behaviors from planning algorithms instead of human demonstrations. Their study, published in IEEE Robotics and Automation Letters (RA-L), suggests that the quality of synthetic training data matters more than researchers previously realized. The paper was recently awarded the IEEE RA-L Best Paper Award.

Modern robot-learning systems often rely on imitation learning, in which robots copy demonstrations collected from humans controlling robotic hardware remotely. But teleoperation systems are poorly suited for highly dexterous tasks involving many simultaneous contact points and finger movements. To bypass that limitation, the researchers used motion-planning algorithms to automatically generate demonstrations inside physics simulations. The idea was to let robots learn from virtual experience rather than from people. But the team discovered a problem: popular planning systems known as rapidly exploring random trees, or RRTs, produce demonstrations that are too inconsistent.

“These planners are very good at finding solutions,” says lead author Huaijiang Zhu. “But when every solution looks different, the learning system struggles to figure out what behavior it should imitate.”

The team found that the planners’ randomness created what researchers call “high-entropy” data — demonstrations that solved the same task through wildly different motions. Although this diversity helps planners explore possible solutions, it makes imitation learning less effective.
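One generic way to make the idea of "high-entropy" demonstrations concrete is Shannon entropy over the choices that different demonstrations make in the same situation. The sketch below is an illustration under stated assumptions: the move labels and both demonstration sets are hypothetical, and the article does not describe how the paper itself quantifies entropy.

```python
import math
from collections import Counter


def shannon_entropy(labels):
    """Shannon entropy, in bits, of the empirical distribution of labels."""
    counts = Counter(labels)
    total = len(labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical first moves taken by eight demonstrations from the same start state.
random_planner_moves = [
    "regrasp_left", "push", "pivot", "regrasp_right",
    "slide", "push", "pivot", "lift",
]
consistent_planner_moves = ["pivot"] * 7 + ["push"]

high = shannon_entropy(random_planner_moves)
low = shannon_entropy(consistent_planner_moves)

print(f"random-style demonstrations:     {high:.2f} bits")
print(f"consistent-style demonstrations: {low:.2f} bits")
```

The more the demonstrations disagree about what to do in the same state, the higher the entropy, and the less clear the target is for a policy trying to imitate them.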

To address the issue, the researchers developed alternative planning approaches that generated more consistent demonstrations. One method emphasized steady progress toward a goal rather than random exploration, while another reused a library of predefined motions to reduce variability.

The researchers tested the approach on two difficult manipulation problems. In one task, two robot arms had to rotate a large cylinder by 180 degrees while repeatedly changing their grips. In another, a dexterous robotic hand manipulated a cube in its palm to match target orientations. They found that robots trained on the more consistent demonstrations achieved much higher success rates than those trained on standard RRT-generated data, even when using relatively small datasets. In the dual-arm task, the improved system reached near-perfect performance with only 100 demonstrations.

The team also transferred the learned policies directly from simulation to real-world hardware without additional retraining. The dual-arm robot succeeded in 90 percent of physical trials, while the dexterous hand completed about 62 percent of its attempts.

The study highlights a growing shift in robotics research. Rather than treating classical motion planning and machine learning as separate approaches, scientists are increasingly combining them. In this case, planning algorithms effectively served as teachers for neural-network-based robot policies.

The findings also reinforce a broader lesson emerging across AI: more data is not always better. Carefully structured, consistent examples may teach machines more effectively than large quantities of noisy or highly variable demonstrations.

Challenges remain, particularly for tasks involving deformable objects or soft robotic hands that are difficult to simulate accurately. But the work suggests a future in which robots learn increasingly sophisticated physical skills from virtual environments designed not just to produce solutions, but to produce solutions machines can understand.

H. Zhu et al., "Should We Learn Contact-Rich Manipulation Policies From Sampling-Based Planners?," in IEEE Robotics and Automation Letters, vol. 10, no. 6, pp. 6248-6255, June 2025, doi: 10.1109/LRA.2025.3564701.

NYU Tandon and NEC Put a Price Tag on Flood Protection in the Rockaways

In the Rockaways, a peninsula neighborhood in Queens, New York City, decisions about where to invest in flood barriers, beach nourishment, or shoreline reinforcement could soon be backed by something that has long been missing from the conversation: a clear accounting of what those investments are actually worth.

A research project from NYU Tandon School of Engineering's Center for Urban Science and Progress (CUSP), developed in partnership with NEC Corporation, used geospatial analysis and economic modeling to do exactly that.

But the more disruptive question the project raises is not just what flood resilience is worth, but who is receiving that value, and why they are not yet part of the funding conversation.

Developed through CUSP's Capstone Program, a two-semester initiative in which students work with external partners to address real-world urban challenges, the project was presented on May 1 at the Urban Data Science Showcase at NYU Tandon’s Brooklyn campus.

NEC and NYU have subsequently signed a Memorandum of Understanding (MOU) committing both organizations to further studies in urban disaster prevention, resilience, and advanced technology.

At the core of the project is a value chain framework that maps how a single flood protection investment generates ripple effects across transportation, housing, public health, and community wellbeing. Rather than treating these benefits as abstract, the team built five quantitative models that convert avoided damages into dollar figures.

"The central insight here is that flood resilience creates enormous economic value for stakeholders who have never been asked to contribute to its cost: transit operators, insurers, healthcare systems, tourism economies," said the project's faculty mentor, Yuki Miura, assistant professor at CUSP and Tandon's Department of Mechanical and Aerospace Engineering, and a member of the New York City Panel on Climate Change. "These are not passive bystanders to flood risk. They are material beneficiaries. Quantifying that stake is the first step to bringing them into the investment conversation."

The numbers are striking. Avoided traffic disruption from roadway flooding is worth an estimated $105 million over ten years. Mental health costs linked to flood-triggered trauma, estimated from post-Sandy PTSD rates three times the national average among Rockaway Peninsula residents, reach $501 million per major flood event.

Emergency shelter costs for the 15,932 public housing residents living in the flood zone approach $16.5 million per event. Protecting beachfront tourism preserves an estimated $170 million in revenue over a decade. A fifth model accounts for the health burden that construction noise places on the roughly 44,000 residents living near active worksites.

Taken together, the analysis suggests that losses of up to approximately $800 million could be avoided through flood protection measures currently underway under the Greater Rockaway Resilience Plan.
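As a rough check, the four dollar figures quoted above can be added up. The sketch below assumes, for illustration only, one major flood event within the ten-year horizon so that per-event and per-decade figures can be combined; the article does not say how the project aggregated its models. The fifth model, on construction noise, is left out because no dollar figure is reported for it.

```python
# Reported estimates, in millions of US dollars.
estimates_musd = {
    "avoided traffic disruption (over ten years)": 105.0,
    "mental health costs (per major flood event)": 501.0,
    "emergency shelter costs (per event)": 16.5,
    "beachfront tourism revenue (over a decade)": 170.0,
}

# Assumption: one major flood event in the decade, so all four are simply summed.
total_musd = sum(estimates_musd.values())

for label, value in estimates_musd.items():
    print(f"{label:<48} ${value:>6.1f}M")
print(f"{'total':<48} ${total_musd:>6.1f}M")
```

Under that assumption the four figures sum to $792.5 million, in line with the reported figure of approximately $800 million.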

The models were built using ArcGIS and Excel, integrating spatial flood hazard data with socioeconomic indicators, including land use, tax records, and demographics. Findings were validated through prior research comparisons and direct interviews with Rockaway residents, community organizations, and representatives from the public and private sectors.

"When we can show a health system that a flood barrier reduces its emergency admissions by a measurable margin, or show a transit authority the precise cost of service disruption it avoids, the question of who should fund resilience infrastructure starts to have a different answer," said Miura. "That is not only a Rockaways question. It is a question every city with a coastline is facing."

For NEC, whose expertise lies in value chain modeling and digital technologies, the project reflects a broader strategic priority.

"While strengthening resilience is urgent, public and private sector efforts often remain fragmented, and for individual projects, ROI is often unclear," said Ryutaro Adachi, Executive Professional at NEC's GX Business Development Division. "This research presents a basic framework for evaluating the economic impact of projects with ambiguous effects and suggests the potential for expanding future funding methods."

Eighty-eight percent of public housing units on the peninsula sit within the flood zone, and waitlists stretch beyond five years, meaning displaced residents have almost nowhere to go when flooding strikes.

While the Rockaways served as the test case, the framework is designed to generalize across geographies and infrastructure contexts. Under the MOU, NEC and NYU Tandon will explore opportunities for social implementation of the findings, including engagement with financial institutions on new financing methods made possible by technologies such as satellite image analysis, AI, and remote sensing.

The project was led by CUSP graduate students Christian Humann, Kamili Afra, and Ziming Xiong, with Miura as faculty mentor and Adachi and Takuo Shioda of NEC Corporation as project sponsors.

Downtown Brooklyn as a Living Lab for AI-Driven Retail Planning

In Downtown Brooklyn, decisions about where to open a coffee shop, attract a retailer, or fill a vacant storefront could soon be informed by an unlikely source: artificial intelligence trained on how people actually move through the neighborhood.

A new research project from the Resilient Urban Networks Lab, led by Takahiro Yabe — an assistant professor at the Center for Urban Science and Progress (CUSP) and the Department of Technology Management and Innovation at NYU Tandon School of Engineering — is using anonymized mobility data and advanced AI models to better understand how people choose where to spend time and how those choices shape the local economy.

Developed in collaboration with the Downtown Brooklyn Partnership (DBP), the work aims to provide a practical tool for retail planning and economic development.

At the core of the project is a system that models thousands of individuals as “AI agents,” each representing a type of person who lives, works, or visits Downtown Brooklyn. Using mobile phone location data, demographic information, and business attributes, researchers created a “synthetic population” of roughly 20,000 agents that simulate real-world behavior.

“We’re essentially building something like SimCity, but grounded in real human behavior,” said Yabe.

These agents can be used to test “what-if” scenarios, such as how foot traffic might change if a new retailer opens, a grocery store is introduced, or a popular café closes. Earlier research could estimate the economic spillover effects of business closures, but this approach goes further by simulating how entirely new additions, such as parks, retail stores, or entertainment venues, might reshape activity across the neighborhood.

“For retail operators, real estate developers, and property owners, one of the biggest challenges is uncertainty,” said Yabe. “This framework allows us to test scenarios before making costly decisions, whether that’s introducing a new tenant, redesigning a space, or rethinking an entire retail mix. The goal is to turn data into a practical decision-making tool that reduces risk and improves outcomes for neighborhoods.”

For organizations like the DBP, which helped shape the research questions and provided local context, the goal is not just to understand behavior but to support decisions about retail strategy. This includes identifying what types of businesses should fill vacant storefronts to attract visitors and encourage them to stay longer in the area.

“They help us frame the right questions,” Yabe said, noting that the Partnership has been closely involved in identifying real-world use cases and providing information about local business openings, closures, and potential tenants.

"Understanding where people go and why is the foundation of a healthy retail market," said Mark Landolina, Senior Director of Real Estate and Economic Development at DBP. "This research gives us the ability to simulate how foot traffic and consumer demand shift when a new business opens or closes before it happens. That means we can go to landlords and tenants with concrete, data-backed recommendations about what types of businesses belong where, taking the guesswork out of leasing decisions and helping the market respond to real demand. That's how you fill the right storefronts with the right businesses and build a stronger Downtown Brooklyn."

Early findings highlight how interconnected the neighborhood’s economy is. In one simulation, when a popular coffee shop was removed, customer demand did not shift to a single competitor. Instead, it spread across many nearby businesses, with most alternatives located within a short walking distance.
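A toy version of this kind of "what-if" test can be written with a simple distance-decay choice model. This is a deliberately simplified stand-in, not the project's AI-agent simulation: the shop names, walking distances, the `decay_m` parameter, and the exponential form are all hypothetical choices made for illustration.

```python
import math


def visit_shares(distances_m, decay_m=200.0):
    """Share of visits per shop; attractiveness decays exponentially with distance."""
    weights = {name: math.exp(-d / decay_m) for name, d in distances_m.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


# Hypothetical walking distances (meters) from one office block to nearby shops.
shops = {"cafe_a": 50, "cafe_b": 120, "bakery": 150, "deli": 300, "tea_bar": 400}

before = visit_shares(shops)

# What-if scenario: the most popular shop, cafe_a, closes.
remaining = {name: d for name, d in shops.items() if name != "cafe_a"}
after = visit_shares(remaining)

# How much of cafe_a's former share each remaining shop picks up.
gains = {name: after[name] - before[name] for name in remaining}

for name, gain in sorted(gains.items(), key=lambda item: -item[1]):
    print(f"{name:<8} gains {gain:.3f} of total visits")
```

In this toy model the closed shop's demand is spread across every remaining business, with nearer ones picking up more of it. That loosely mirrors the pattern the researchers describe, though their model draws on far richer behavioral data.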

This pattern suggests that Downtown Brooklyn operates as a tightly linked retail ecosystem, where businesses share and redistribute foot traffic rather than compete in isolation. It also points to the importance of proximity and diversity in sustaining neighborhood vitality.

The research also demonstrates that everyday movement patterns, particularly predictable routines like weekday lunch trips, can be modeled with a high degree of accuracy. The model has been tested against real-world business openings and closures, and researchers say the level of predictive accuracy improves significantly when combining behavioral personas with spatial and business data.

“The level of accuracy we’re seeing is really, really high, especially for out-of-sample predictions compared to traditional machine learning approaches,” Yabe said.

While the current analysis focuses on restaurant visits as a starting point, the broader framework can be applied to a wide range of retail and urban planning questions, from tenant mix to long-term neighborhood development.

The project is part of NYU CUSP’s capstone program, a two-semester initiative in which students work with external partners to address real-world urban challenges. It was presented on May 1 at the Urban Data Science Showcase at NYU’s Brooklyn campus. The project, titled Enhancing Downtown Brooklyn’s Retail Market Through Data-Driven Interventions, was led by CUSP graduate students Sizhe (Alex) Xu and Divya Natekar, with Ph.D. students DongHak Lee and Boyang Li (CUSP, TMI) as student mentors, Yabe as the faculty mentor, and the DBP serving as the project sponsor.

About DBP Living Lab:

Downtown Brooklyn is a place where collaboration and innovation come together to solve real problems. Through our Living Lab program, DBP partners with groups to solve quality of life challenges facing cities, using Downtown Brooklyn as a platform to test technologies and generate real-world insights. This initiative is a perfect example of what the Living Lab is all about, bringing NYU Tandon's cutting-edge research and technology to work right in our own backyard.

Why Faster AI Isn’t Always Better

In the race to make AI models not just reason better but respond faster, latency — the delay before an answer appears — is often treated as a purely technical constraint, something to minimize and move past. But how is this relentless push for speed actually impacting the people using these systems every day?

There is a rich body of work in human-computer interaction linking faster response times to better usability. But AI models are fundamentally different from the deterministic systems that previous research was built on. When you wait for a file to download or a page to load, the outcome is fixed and predictable. AI models are probabilistic — you cannot anticipate the precise response. Their conversational interface means users naturally read human social cues into the interaction. A pause might be read as the AI "thinking," for instance. Users are increasingly asked to choose between faster models and slower, deeper-reasoning ones, without guidance on what that choice actually means for their experience.

A recent study presented at CHI’26 explored how response timing shapes the way people use and evaluate AI systems. Felicia Fang-Yi Tan and Technology Management and Innovation Professor Oded Nov recruited 240 participants and asked them to complete common knowledge work tasks using a chatbot. Some tasks focused on creation, such as brainstorming ideas or drafting text. Others centered on advice, like evaluating decisions or offering recommendations. Crucially, the system was engineered to respond at different speeds. Some participants received answers after just two seconds, while others waited nine or even twenty seconds.
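The timing manipulation can be pictured as a thin wrapper that holds back a chatbot's answer until a target delay has elapsed. The sketch below is hypothetical: the article does not describe how the study's system was built, and `make_delayed_responder`, `fake_model`, and the injectable `sleep` and `clock` parameters are names introduced here for illustration. Only the 2, 9, and 20 second delays come from the article.

```python
import time
from typing import Callable

LATENCY_CONDITIONS_S = (2, 9, 20)  # delays reported in the article


def make_delayed_responder(
    generate: Callable[[str], str],
    delay_s: float,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Callable[[str], str]:
    """Wrap a reply function so each answer is released after about delay_s seconds."""

    def respond(prompt: str) -> str:
        start = clock()
        reply = generate(prompt)
        # Wait only for the time not already spent generating the reply.
        remaining = delay_s - (clock() - start)
        if remaining > 0:
            sleep(remaining)
        return reply

    return respond


def fake_model(prompt: str) -> str:
    """Stand-in for a real chatbot call (hypothetical)."""
    return f"Here are some ideas about: {prompt}"


# Demo with a recording sleep and a frozen clock so the example runs instantly.
recorded_waits = []
for delay in LATENCY_CONDITIONS_S:
    responder = make_delayed_responder(
        fake_model, delay, sleep=recorded_waits.append, clock=lambda: 0.0
    )
    responder("team offsite themes")

print(recorded_waits)
```

Because the reply is generated the same way in every condition, only the delay differs. That is the property that lets differences in perceived thoughtfulness be attributed to timing rather than to content.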

The results challenge a long-standing assumption in human-computer interaction that faster is always better.

“People assume faster AI is better, but our findings show that timing actually shapes how intelligence is perceived,” says Tan. “A short pause can signal care and deliberation, making the same response feel more thoughtful and useful, even when nothing about the underlying AI model has changed.”

Surprisingly, how quickly the AI responded did not significantly change how people behaved (e.g., frequency of prompting, copy-pasting). Participants prompted just as much and interacted with the system in broadly similar ways regardless of whether they waited two seconds or twenty. Instead, behavior depended more on the type of task. Participants attempting creation tasks (which involve producing new content such as writing) prompted more back and forth, with users refining and iterating on ideas. Advice tasks (which involve providing guidance, critique, or evaluation) led to fewer, more focused exchanges.

Where timing did matter was in perception. Participants who received two-second responses consistently rated the AI’s answers as less thoughtful and less useful. In contrast, those who experienced longer delays tended to view the same kinds of responses more favorably. Many interpreted the pause as a sign that the system was “thinking,” attributing greater care and deliberation to its output.

This effect highlights a subtle but powerful feature of human psychology. In everyday conversation, pauses carry meaning. A quick reply can feel impulsive, while a measured delay suggests reflection. People appear to apply these same social expectations to machines, even when they know they are interacting with software.

The implications extend beyond user experience. Given that latency is an inherent feature of today's AI models, perhaps the more productive question is not how to eliminate it, but what it can be designed to do. Positive friction refers to intentional slowdowns designed to promote cognitive benefits such as reflection. Rather than treating every millisecond of waiting as waste, designers might ask: what can this pause do?

The study also surfaces important ethical considerations. If people equate longer response times with higher quality, they may place undue trust in slower systems, regardless of whether the output is actually better. This raises questions about whether AI systems should be designed to manage timing in ways that shape user perception and, if so, whether users should be informed when they are.

Bubble Trouble: New Research Highlights Outsized Impacts of Tiny Bubbles in Water Electrolysis

Hydrogen is often described as the fuel of the future — a clean, energy-dense way to store renewable power and decarbonize industries from steelmaking to shipping. But inside the devices that produce it, a surprisingly small and familiar phenomenon is getting in the way: bubbles.

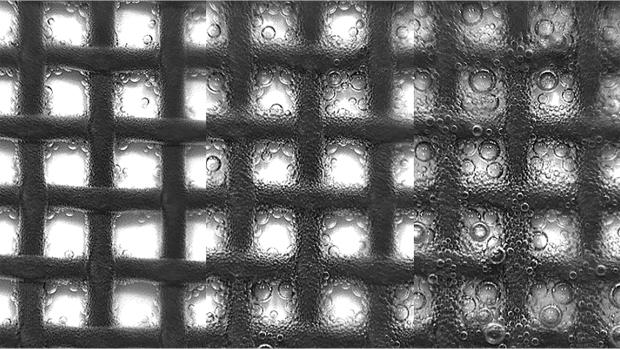

In water electrolysis, electricity splits water into hydrogen and oxygen gases. Those gases naturally form bubbles on the surfaces of electrodes. For decades, researchers have focused on improving catalysts and materials to make this process more efficient. Yet a new paper by postdoctoral researcher Darjan Podbevšek and Miguel A. Modestino, Sustainable Engineering Initiative Director and Associate Professor of Chemical and Biomolecular Engineering, published in the journal Joule, argues that the real bottleneck may be far more mundane.

“Bubble dynamics represent a largely overlooked bottleneck that can account for significant efficiency losses,” says Podbevšek.

At first glance, bubbles might seem harmless, even expected. But their presence sets off a cascade of problems. As bubbles stick to electrode surfaces, they block the very sites where reactions are supposed to occur. They also disrupt the flow of charged particles in the liquid, increasing electrical resistance. As more bubbles accumulate, they can create uneven conditions across the electrode, further degrading performance.

In some cases, these effects are not trivial. The authors note that bubble-related losses can range from about 5 percent to as much as 25 percent of the total energy input, depending on operating conditions. In a technology where efficiency directly affects cost and scalability, that’s a major obstacle.

What makes the problem especially challenging is that bubbles behave in complex ways across multiple scales. At the smallest level, they begin as tiny nuclei, often forming at microscopic imperfections on the electrode surface. Their growth depends on subtle forces, including surface tension and gradients in temperature or concentration.

As bubbles grow and detach, they interact with one another, merging into larger bubbles or forming tiny bubble layers that blanket the electrode. These microscale “bubble carpets” can fundamentally alter electrode/electrolyte interactions and how reactants and products are transported in the system.

“It’s how and where they evolve, grow, detach, and interact that determines their impact,” the authors emphasize.

This multiscale complexity helps explain why the problem has been so difficult to tackle. Many experiments focus on single bubbles under highly controlled conditions, but real electrolyzers operate in messy, turbulent environments filled with countless interacting bubbles. Bridging that gap, the authors argue, is essential for making meaningful progress.

A typical electrolyzer is a tightly sealed, windowless box operating at high pressures and temperatures (>30 bar, >80°C) under highly alkaline or acidic conditions, which makes it difficult to observe bubbles directly. The authors suggest that this is at least part of the reason there are comparatively few bubble-related studies in the field.

The emerging view is that bubbles are not just a nuisance but an engineering challenge — one that can be managed, and perhaps even exploited. Researchers are exploring ways to design electrode surfaces that encourage bubbles to detach more quickly, preventing them from blocking reactions. Others are experimenting with flowing the liquid electrolyte more aggressively, using motion to sweep bubbles away.

Some of the most intriguing approaches involve changing how the electricity itself is applied. In “pulsed electrolysis,” the current is switched on and off rapidly. During the brief pauses, bubbles have time to dissipate, reducing their buildup and the associated energy losses. “Dynamic operation introduces additional control parameters,” the authors note, opening new possibilities for optimization.

Artificial intelligence is also beginning to play a role, helping scientists analyze complex bubble patterns and optimize operating conditions in ways that would be difficult by hand.

The implications extend far beyond the lab. Global demand for hydrogen is expected to grow dramatically in the coming decades, driven by efforts to cut carbon emissions. Making electrolysis more efficient — even by a small margin — could have an outsized impact on cost and energy use at scale.

“Connecting microscale bubble phenomena to macroscale electrolyzer performance” is therefore critical, the authors argue. In other words, understanding the physics of tiny bubbles could help unlock the full potential of hydrogen as a clean fuel and help improve other gas-evolving electrochemical reactions (or reactors).

Seen this way, the future of green hydrogen may hinge not just on breakthroughs in chemistry or materials science, but on something more fluid and elusive: the behavior of countless small bubbles rising, colliding, and disappearing inside a reactor. Managing them effectively could be the key to turning a promising technology into a practical one.

When the Rain Comes, Some New York City Subway Riders Stay Home. Scientists Are Now Mapping Exactly Who, and Where

On a sweltering August afternoon or in the teeth of a winter storm, New York City subway riders make a quiet calculation: Is the trip worth it?

A new study published in npj Sustainable Mobility and Transport takes a detailed look at how those decisions show up in ridership patterns across the system, and how they vary from station to station.

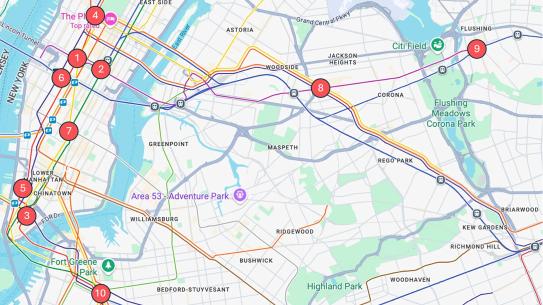

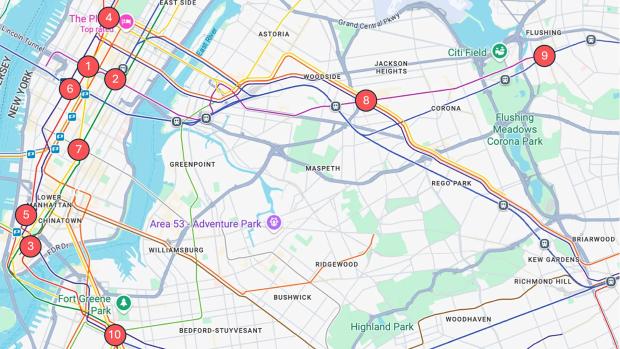

Researchers from NYU Tandon, the University of Louisville, and the University of Hong Kong analyzed hourly ridership at 10 major subway stations between 2023 and 2025. Using a statistical technique called vine copula modeling, they examined how stations’ ridership moves together under different weather conditions rather than treating each station as an isolated case.

“Think about what actually happens when a storm hits,” said Joseph Chow, one of the paper’s authors and an NYU Tandon Institute Associate Professor. “There is structure in how the riders of a system respond to the storm, almost like a unique ‘signature’ of the system to a type of extreme weather event. Understanding these structures and how they evolve can help different cities better prepare their public transit systems to be resilient against extreme weather events.”

Heavy precipitation has the strongest effect during the evening rush hour. As detailed in the appendix, median declines during heavy rain range from nearly 29 percent at Columbus Circle to less than 8 percent at Grand Central. Outer-borough stations such as Flushing–Main Street also show large declines, approaching 26 percent.

Evening travel is more flexible than morning commutes, so heavy rain tends to shift or suppress trips rather than eliminate them entirely. Riders may leave earlier, wait out the storm, or cancel discretionary plans, leading to sharper drops during that specific peak hour even though most still get home, the researchers explained.

Extreme cold tells a different story. Even during the morning rush, when its effects are strongest, ridership declines are modest, generally between about 1 and 2.4 percent across stations. Larger effects appear off-peak, when discretionary trips are more likely to be canceled.

“Commuters maintain their routines even when temperatures plunge,” said Chow, who is also the Deputy Director of C2SMART, Tandon’s transportation research center. “It’s the discretionary traveler, the person heading to a restaurant or a friend’s apartment, who cancels the transit trip or switches to a different mode.”

The study also highlights sharp differences between nearby stations. Columbus Circle emerges as one of the most weather-sensitive locations during heavy rain, while Grand Central, less than two miles away, shows comparatively small declines.

That variation suggests borough location alone does not determine resilience. Infrastructure, station design, connectivity, and surrounding land use all appear to play a role.

“What we’re giving planners is a way to see the whole network respond to a storm or a heat wave, not just one station at a time. And this method allows them to generate other plausible ridership scenarios under extreme weather, aiding decision-making,” said Omar Wani, an NYU Tandon Assistant Professor and one of the paper’s authors.

The authors emphasize important limitations. The analysis focuses on 10 high-ridership stations, and extreme weather events are relatively rare in the data. To address this, the model generates plausible ridership patterns based on observed relationships across stations.

That means the results should be interpreted as estimates of likely responses, rather than simple averages of past storms.
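The idea of generating plausible joint scenarios from observed relationships can be sketched with a much simpler model than the one used in the study. The example below uses a two-station Gaussian copula as a stand-in. It is not the vine copula the authors fit across ten stations. The observed values, the dependence parameter `rho`, and the function names are all hypothetical.

```python
import random
from statistics import NormalDist


def empirical_quantile(sorted_values, u):
    """Return the value at quantile u (0 <= u <= 1) of a sorted sample."""
    index = min(int(u * len(sorted_values)), len(sorted_values) - 1)
    return sorted_values[index]


def simulate_joint_declines(station_a, station_b, rho, n, seed=0):
    """Draw n joint (a, b) scenarios using a bivariate Gaussian copula.

    station_a, station_b: observed percent changes at each station.
    rho: assumed dependence between the stations on the normal scale.
    """
    rng = random.Random(seed)
    std_normal = NormalDist()
    a_sorted, b_sorted = sorted(station_a), sorted(station_b)
    scenarios = []
    for _ in range(n):
        # Correlated standard normals capture how the stations move together.
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + (1.0 - rho ** 2) ** 0.5 * rng.gauss(0.0, 1.0)
        # Map to uniforms, then to each station's own observed distribution.
        u1, u2 = std_normal.cdf(z1), std_normal.cdf(z2)
        scenarios.append((empirical_quantile(a_sorted, u1),
                          empirical_quantile(b_sorted, u2)))
    return scenarios


# Made-up percent changes during a handful of heavy-rain hours.
obs_a = [-31.0, -29.5, -28.0, -26.0, -24.5]
obs_b = [-9.5, -8.0, -7.5, -6.0, -5.0]

scenarios = simulate_joint_declines(obs_a, obs_b, rho=0.7, n=1000)
print(scenarios[:3])
```

Separating each station's own distribution from the dependence between stations is what lets a copula produce many scenarios from only a few observed events.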

Even so, a clear pattern emerges. Heavy rain hits hardest during peak hours, while extreme cold has a greater effect off-peak, and the differences between stations are consistent rather than random.

The implications extend beyond operations. Because some neighborhoods rely more heavily on transit, uneven drops in ridership during extreme weather may translate into uneven burdens. As climate change increases the frequency of severe weather, understanding where and when riders stay home could help agencies plan more targeted responses.

In addition to Chow and Wani, the paper’s authors are Yan Guo and Brian Yueshuai He of the University of Louisville, and Zhiya Su of the University of Hong Kong. Funding for the research was provided by the National Science Foundation.

Guo, Y., He, B.Y., Chow, J.Y.J. et al. Assessing subway ridership resilience under extreme weather with vine copula modeling. npj. Sustain. Mobil. Transp. 3, 25 (2026). https://doi.org/10.1038/s44333-026-00094-4

Appendix

The tables below show median declines in ridership at the ten stations studied, compared with normal weather conditions. Each weather type is measured during the peak period when its effects are most pronounced, using the evening commute (4 to 5 p.m.) for heavy rain and the morning commute (8 to 9 a.m.) for extreme cold.

Heavy rain, evening commute (4 to 5 p.m.)

| Station | Borough | Median Decline |
| --- | --- | --- |
| Columbus Circle | Manhattan | -28.9% |
| Flushing-Main St | Queens | -26.4% |
| Fulton Street | Manhattan | -24.7% |
| Times Square | Manhattan | -23.9% |
| Chambers St/WTC | Manhattan | -21.5% |
| Atlantic Av-Barclays Center | Brooklyn | -21.4% |
| Broadway/Jackson Heights | Queens | -20.4% |
| Penn Station | Manhattan | -19.3% |
| Union Square | Manhattan | -10.2% |
| Grand Central | Manhattan | -7.8% |

Extreme cold, morning commute (8 to 9 a.m.)

| Station | Borough | Median Decline |
| --- | --- | --- |
| Columbus Circle | Manhattan | -2.4% |
| Flushing-Main St | Queens | -2.4% |
| Fulton Street | Manhattan | -2.0% |
| Broadway/Jackson Heights | Queens | -2.0% |
| Chambers St/WTC | Manhattan | -1.9% |
| Penn Station | Manhattan | -1.8% |
| Atlantic Av-Barclays Center | Brooklyn | -1.8% |
| Times Square | Manhattan | -1.7% |
| Grand Central | Manhattan | -1.1% |
| Union Square | Manhattan | -1.0% |
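As a rough illustration of what a median decline relative to normal weather means, the sketch below computes one from raw hourly counts. The counts are made up, and the baseline choice (the median of normal-weather hours) is an assumption made for this example. The study derives its figures from a fitted model rather than from a direct calculation like this.

```python
from statistics import median


def median_percent_change(normal_counts, extreme_counts):
    """Median percent change of extreme-weather hours against a normal baseline."""
    baseline = median(normal_counts)
    if baseline <= 0:
        raise ValueError("baseline ridership must be positive")
    changes = [100.0 * (count - baseline) / baseline for count in extreme_counts]
    return median(changes)


# Hypothetical riders per hour during the evening peak at one station.
normal_hours = [1000, 1040, 980, 1010, 990]
heavy_rain_hours = [700, 730, 760]

decline = median_percent_change(normal_hours, heavy_rain_hours)
print(f"Median change during heavy rain: {decline:.1f}%")
```

Using the median rather than the mean keeps a single unusually bad storm from dominating the estimate.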

Love, Power and Fantasy in the Age of AI Companions

A new study of AI chatbots suggests people aren’t just turning to artificial intelligence for conversation or emotional support. Instead, many are using these systems to act out romantic fantasies and co-create fictional worlds.

Drawing on a dataset of more than 5.7 million chatbots and thousands of Reddit discussions, NYU Tandon researchers led by Ph.D. student Julia Kieserman and Assistant Professor Rosanna Bellini found that two dominant use cases define the Character.AI chatbot platform: intimate roleplay and narrative exploration. Together, they point to a shift in how some people engage with AI — not as passive assistants or companions, but as collaborative actors in deeply personalized fictions.

Interactive ‘Romantasy’

The study found that about 63 percent of a sample of nearly 1,500 popular chatbots were designed for romantic or intimate interactions. These bots often take on roles like a boyfriend, husband or love interest, and are built to simulate emotional or sexual roleplay with users.

“We found that creators were defining chatbots to be avenues to explore fantasies with a technology that can provide unexpected feedback,” Kieserman says. “Character.AI chatbots generally appear to be more similar to fan fiction, rather than as a replacement for companionship.”

Many of these scenarios follow familiar patterns from romance fiction. The AI characters are frequently portrayed as dominant or high-status figures — such as CEOs, celebrities or mafia bosses — while the user takes on a more subordinate role.

Power imbalances were present in roughly one-quarter of popular chatbots, and some included traits like jealousy, possessiveness or emotional intensity. In addition, about 22 percent contained references to violence, including aggressive behavior or dangerous situations.

Researchers note that these elements mirror common tropes in books and fanfiction, but the difference is that users can now actively participate in the story rather than just read it.

Beyond romance, many users are treating chatbots as tools for storytelling, “to explore fictional worlds and interact with favorite characters,” Kieserman says.

Around 39 percent of popular chatbots were based on existing fandoms, such as anime, video games or movies. Users often place themselves inside these worlds, creating new storylines or extending existing ones.

Some rely on chatbots to overcome writer’s block, while others use them to simulate role-playing games or fanfiction scenarios. Unlike traditional writing tools, the AI can respond unpredictably, adding new ideas and directions to the story.

Breaking Boundaries

Despite the appeal, users frequently report friction between expectation and reality. Some complain that chatbots become sexual too quickly, disrupting carefully constructed storylines. Others express frustration with increasing content restrictions that limit romantic or explicit interactions.

This tension reveals a fundamental challenge: how to moderate AI behavior in spaces where users actively seek edge cases. What counts as inappropriate in one context may be the entire point in another.

Complicating matters further is the question of responsibility. When a chatbot behaves badly — becoming aggressive, inappropriate or incoherent — users are divided over who is to blame: the platform, the creator or the AI itself. The result is a kind of distributed authorship, unique to chatbot-based platforms like Character.AI that create chatbots from user input such that no single entity fully controls the outcome.

Heavy Use and Potential Risks

Perhaps the most important insight from this research is that AI chatbots are not exclusively replacing human relationships but are amplifying existing cultural patterns. What AI seems to add is immediacy and agency. Users can step inside these narratives, test emotional boundaries and explore identities in ways that were previously confined to imagination or text.

At the same time, the immersive nature of these interactions raises concerns. Some users report spending hours a day on the platform, occasionally to the detriment of offline relationships and well-being. The very qualities that make AI compelling — responsiveness, adaptability, lack of judgment — also make it hard to disengage.

Overall, the findings suggest a shift in how people engage with AI systems. Rather than treating them as assistants or tools, users are increasingly using them as interactive environments for exploring relationships and stories.

That shift raises new questions about safety, moderation and the psychological impact of highly personalized AI experiences. But it also highlights something more basic: people are using AI not just to get things done, but to imagine and experience different versions of reality.

Rivalry and Collaboration Attitudes: NYU Study Finds Writers Need Both to Thrive in the Age of AI

When a screenwriter told New York University researchers last year that letting AI do her work would make her "miserable inside," she was onto something.

A follow-up study from NYU’s Tandon School of Engineering and Stern School of Business finds that the instinct to compete with generative AI, rather than simply embrace it, is associated with meaningful long-term benefits for writing professionals.

The catch: rivalry alone isn't enough either.

The 2026 study, led by Rama Adithya Varanasi, a postdoctoral researcher in Tandon's Technology, Management and Innovation Department, alongside Tandon Professor Oded Nov, and Batia Mishan Wiesenfeld, a professor of management at Stern, surveyed 403 professional writers across marketing, publishing, education, and the arts. Findings will be presented at the CHI Conference on Human Factors in Computing Systems this month.

The work extends a 2025 qualitative study by the same team, which interviewed 25 experienced writers and introduced the concept of "AI rivalry" — the idea that some writers proactively compete against AI rather than simply avoid it, targeting what they see as its weaknesses, such as its difficulty producing content rooted in specific communities or geographies.

The new research asked a larger question: what actually happens to writers' careers, skills, and satisfaction depending on how they orient themselves toward AI?

The study finds risks at both extremes. Writers who reported strong collaborative attitudes toward AI also reported higher short-term productivity and job satisfaction, but invested less in maintaining their own skills — the risk of over-reliance.

Writers who perceived AI as a rival reported stronger skill maintenance and greater investment in peer relationships, but that perception showed no significant association with productivity or satisfaction — the risk of under-reliance.

"The concern isn't that workers use AI," said Varanasi. "It's that they stop developing the capabilities that make humans irreplaceable. What this study tells managers is that they can't measure success purely by output. If the workflow removes the need for human judgment, the skill atrophies and that cost doesn't show up until it's too late."

Notably, rivalry attitudes didn't reflect a rejection of the technology. The data showed these writers reported more experience with generative AI than those who held neither orientation strongly. They studied the AI competition, rather than ignoring it.

The most striking result came from writers who scored high on both orientations simultaneously. This group showed the strongest associations with job crafting and skill maintenance across nearly every dimension measured. Their productivity levels were closer to those of the pure collaboration group, although satisfaction remained higher among pure collaborators, and they did not sacrifice the long-term skill maintenance that pure collaborators invested less in.

"What surprised us is that rivalry and collaboration don't cancel each other out," said Wiesenfeld. "Writers who hold both orientations seem to use AI more deliberately. They get the productivity benefits without outsourcing the judgment."

The study is among the first to measure this tradeoff across a broad set of outcomes — relationships, tasks, cognition, skills, satisfaction, and productivity — drawing on expertise in both human-computer interaction and organizational behavior.

The implications for employers are direct. Organizations that push widespread AI adoption to boost efficiency may be optimizing for the wrong thing, particularly if those workflows come at the cost of workers practicing core human skills.

"Most organizations right now are still developing policies on how employees should relate to AI," said Nov. "Our findings suggest that the relationship workers have with AI matters as much as whether they use it."

The researchers call for a new design structure that builds productive "friction" into AI tools, calibrating how much assistance is offered based on a user's reliance attitudes rather than defaulting to maximum engagement.

The team's next phase will test that concept directly. They are building prototypes of AI tools designed to promote appropriate reliance, and plan to expand the research beyond writing to other creative professions including game developers, graphic designers, and visual artists.

Funding for this research was provided by the National Science Foundation.