Love, Power and Fantasy in the Age of AI Companions

What thousands of chatbot interactions reveal about how people are rewriting intimacy and storytelling

A new study of AI chatbots suggests people aren’t just turning to artificial intelligence for conversation or emotional support. Many are also using these systems to act out romantic fantasies and co-create fictional worlds.

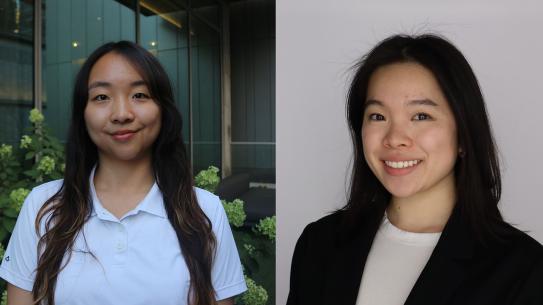

Drawing on a dataset of more than 5.7 million chatbots and thousands of Reddit discussions, NYU Tandon researchers led by Ph.D. student Julia Kieserman and Assistant Professor Rosanna Bellini found that two dominant use cases define the Character.AI chatbot platform: intimate roleplay and narrative exploration. Together, they point to a shift in how some people engage with AI — not as passive assistants or companions, but as collaborative actors in deeply personalized fictions.

Interactive ‘Romantasy’

The study found that about 63 percent of a sample of nearly 1,500 popular chatbots were designed for romantic or intimate interactions. These bots often take on roles like a boyfriend, husband or love interest, and are built to simulate emotional or sexual roleplay with users.

“We found that creators were defining chatbots to be avenues to explore fantasies with a technology that can provide unexpected feedback,” Kieserman says. “Character.AI chatbots generally appear to be more similar to fan fiction, rather than as a replacement for companionship.”

Many of these scenarios follow familiar patterns from romance fiction. The AI characters are frequently portrayed as dominant or high-status figures — such as CEOs, celebrities or mafia bosses — while the user takes on a more subordinate role.

Power imbalances were present in roughly one-quarter of popular chatbots, and some included traits like jealousy, possessiveness or emotional intensity. In addition, about 22 percent contained references to violence, including aggressive behavior or dangerous situations.

Researchers note that these elements mirror common tropes in books and fanfiction, but the difference is that users can now actively participate in the story rather than just read it.

Beyond romance, many users are treating chatbots as tools for storytelling, “to explore fictional worlds and interact with favorite characters,” Kieserman says.

Around 39 percent of popular chatbots were based on existing fandoms, such as anime, video games or movies. Users often place themselves inside these worlds, creating new storylines or extending existing ones.

Some rely on chatbots to overcome writer’s block, while others use them to simulate role-playing games or fanfiction scenarios. Unlike traditional writing tools, the AI can respond unpredictably, adding new ideas and directions to the story.

Breaking Boundaries

Despite the appeal, users frequently report friction between expectation and reality. Some complain that chatbots become sexual too quickly, disrupting carefully constructed storylines. Others express frustration with increasing content restrictions that limit romantic or explicit interactions.

This tension reveals a fundamental challenge: how to moderate AI behavior in spaces where users actively seek edge cases. What counts as inappropriate in one context may be the entire point in another.

Complicating matters further is the question of responsibility. When a chatbot behaves badly (becoming aggressive, inappropriate or incoherent), users are divided over who is to blame: the platform, the creator or the AI itself. The result is a kind of distributed authorship, unique to platforms like Character.AI where chatbots are built from user input, in which no single entity fully controls the outcome.

Heavy Use and Potential Risks

Perhaps the most important insight from this research is that AI chatbots are not exclusively replacing human relationships, but are amplifying existing cultural patterns. What AI seems to add is immediacy and agency. Users can step inside these narratives, test emotional boundaries and explore identities in ways that were previously confined to imagination or text.

At the same time, the immersive nature of these interactions raises concerns. Some users report spending hours a day on the platform, occasionally to the detriment of offline relationships and well-being. The very qualities that make AI compelling — responsiveness, adaptability, lack of judgment — also make it hard to disengage.

Overall, the findings suggest a shift in how people engage with AI systems. Rather than treating them as assistants or tools, users are increasingly using them as interactive environments for exploring relationships and stories.

That shift raises new questions about safety, moderation and the psychological impact of highly personalized AI experiences. But it also highlights something more basic: people are using AI not just to get things done, but to imagine and experience different versions of reality.