The virtuous circle of AI and games at the Game Innovation Lab

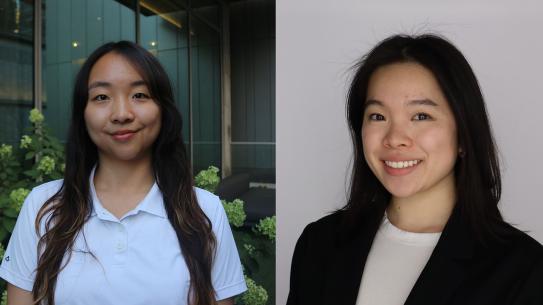

Doctoral students Philip Bontrager and Michael Cerny Green with Game Innovation Lab Director Julian Togelius, professor of computer science and engineering

If one were to draw a Venn diagram with one circle for games and another for computer science and artificial intelligence (AI), the overlap would be huge. The Game Innovation Lab at NYU Tandon is mining that fertile ground by exploring the symbiotic relationship between video games and AI. The implications go far beyond avatars and joysticks: the Lab's work could lead to profound innovations in automated systems that run processes ranging from building HVAC systems to global shipping.

The Lab, led by Julian Togelius, professor of computer science and engineering and a co-founder of the Copenhagen-based AI startup Modl.ai, is a hotbed of research into two complementary questions: how games can help deep neural networks learn, and how AI can help game developers automate expensive, time-consuming parts of game development and make new types of games possible.

Since the beginning of computing, games have served as both a training ground and a benchmark for AI. Now, with systems like Deep Blue and AlphaGo beating the daylights out of human champions, it may seem as if AI and games have gone about as far as they can in their dance of mutual benefit.

Not so, asserts Togelius, who received an IEEE 2020 Outstanding Early Career Award for his contributions to the field of computational intelligence and games. He explains that while deep learning systems can become nearly perfect at playing one game, few can generalize, that is, apply what they have “memorized” about one game to other contexts. In other words, AlphaGo can’t play checkers, at least not without extensive re-engineering.

“The key is using reinforcement learning to teach AI to learn what might be called general game abilities,” says Togelius. “We have been able to improve their ability to do so by adding procedural generation algorithms, which generate game levels that force the reinforcement learning algorithms to play more generally.”
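To make the idea concrete, here is a minimal sketch, assuming a toy grid-maze level format that is not taken from the Lab's work: a generator produces a fresh random level for every training episode and keeps only levels that are actually playable, checked with a breadth-first search from start to goal. The helper names (`generate_level`, `training_levels`, `is_solvable`) are hypothetical, and the learning agent that would play each level is left out.

```python
import random
from collections import deque

WALL, FLOOR = "#", "."

def is_solvable(level, start, goal):
    """Breadth-first search over floor tiles: can a player walk from start to goal?"""
    rows, cols = len(level), len(level[0])
    seen = {start}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if (r, c) == goal:
            return True
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < rows and 0 <= nc < cols
                    and level[nr][nc] == FLOOR and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return False

def generate_level(rng, rows=8, cols=8, wall_prob=0.3):
    """Sample random walls, retrying until the level is playable (hypothetical generator)."""
    start, goal = (0, 0), (rows - 1, cols - 1)
    while True:
        level = [[WALL if rng.random() < wall_prob else FLOOR for _ in range(cols)]
                 for _ in range(rows)]
        level[start[0]][start[1]] = FLOOR
        level[goal[0]][goal[1]] = FLOOR
        if is_solvable(level, start, goal):
            return level, start, goal

def training_levels(seed, episodes):
    """Yield a new playable level for every training episode."""
    rng = random.Random(seed)
    for _ in range(episodes):
        yield generate_level(rng)

# Usage: each level would be handed to a (hypothetical) agent for one episode, e.g.
# for level, start, goal in training_levels(seed=0, episodes=1000):
#     agent.train_episode(level, start, goal)
```

Because an agent trained this way almost never sees the same layout twice, it cannot simply memorize one level. The level format, grid size, and wall density (`wall_prob`) are illustrative choices only.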

The researchers’ work on using automatically generated gameplay and levels to create more general game-playing agents has put the Lab at the center of a virtuous cycle: smarter, more flexible machine learning systems that can play games can also help design them level by level, personalize the experience for each player in real time, and automatically generate tutorial content.

All of which could be a holy grail for game makers, who may spend upwards of $100 million to develop and market a game, with much of that money going into supporting work such as building levels and tutorials.

Size Isn’t Everything

A key finding by Togelius and collaborators is that deep networks trained with reinforcement learning, a very popular training method, are very bad at generalizing to new situations. Nor is bigger necessarily better. In a paper last year, aptly titled “Playing Atari with Six Neurons,” Togelius and collaborators found that surprisingly small artificial neural networks can learn sophisticated strategies, especially if they don’t have to perform visual preprocessing themselves. The research garnered a best paper award at the 2019 International Conference on Autonomous Agents and Multiagent Systems (AAMAS), one of the world’s premier AI conferences.

“Many gameplay programs have thousands of neurons,” says Togelius. “Using just six we demonstrated that the strategies that AI employs can’t be very hard because you can do it with very small networks if you separate out the visual preprocessing. It’s sort of a shocking result because you might wonder what these hundreds of thousands of neurons are doing in a larger network.”
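The split described in the quote can be sketched as follows, assuming a separate encoder (not shown) has already reduced the game screen to a short feature vector. The sketch uses one output neuron per action and picks the most active one. The sizes (`N_FEATURES = 30`, six actions), the `tanh` activation, and the function names are illustrative assumptions rather than the paper's exact setup, and training (for example with an evolution strategy) is omitted.

```python
import math
import random

N_ACTIONS = 6     # one output neuron per available action (illustrative assumption)
N_FEATURES = 30   # length of the compact encoding from a separate, hypothetical encoder

def make_policy(rng):
    """Random weights for a single-layer network: one weight row plus a bias per neuron."""
    return [[rng.gauss(0, 1) for _ in range(N_FEATURES + 1)] for _ in range(N_ACTIONS)]

def act(policy, features):
    """Each neuron computes tanh(w . x + b); the most active neuron picks the action."""
    activations = []
    for row in policy:
        *weights, bias = row
        total = sum(w * x for w, x in zip(weights, features)) + bias
        activations.append(math.tanh(total))
    return max(range(N_ACTIONS), key=lambda a: activations[a])

def n_parameters(policy):
    """Total number of trainable weights and biases in the policy."""
    return sum(len(row) for row in policy)

rng = random.Random(0)
policy = make_policy(rng)
observation = [rng.random() for _ in range(N_FEATURES)]  # stand-in for an encoded screen
action = act(policy, observation)
```

With 30 input features, the entire decision-making part of this network has 6 × (30 + 1) = 186 parameters, which is what makes the result striking next to the far larger networks the quote mentions.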

Graduate students at the Game Innovation Lab are involved in an array of projects aimed at teaching artificial neural networks to learn games, help build them, and teach them. Included among the many projects are:

- International collaborations and competitions applying AI to game generation: Last year the Game Innovation Lab launched a global event, the Generative Design in Minecraft (GDMC) Competition, in which entrants use AI to build settlements in the massively popular game Minecraft.

- Superstitious AI: A recent paper, “‘Superstition’ in the Network: Deep Reinforcement Learning Plays Deceptive Games,” by lead author Philip Bontrager, a Ph.D. student at the Lab, showed how deep reinforcement learning agents playing games in a general video game framework could be led to perform illogical, repetitive acts that have no adaptive value because, in essence, they are “fooled” into thinking those acts are being rewarded.

- Ruben Rodriguez is leading research on how Generative Adversarial Networks (GANs), a kind of artificial neural network, can generate game levels that are aesthetically appealing and playable, as well as pedagogical (a well-designed level also teaches players the skills they need to advance). The team is trying to create these learning experiences artificially, which matters because the same level generation algorithm can also be used to improve the game-playing algorithm and to generate tutorials.

- Research by doctoral students Ahmed Khalifa and Michael Cerny Green aims to apply AI to creating game levels that are not only playable but also built around specific in-game actions, such as jumping and leaping (known as “mechanics”). They use evolutionary and quality-diversity algorithms to generate small sections of Super Mario Bros. levels, called “scenes,” using three different simulation approaches to enrich gameplay.
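Quality-diversity algorithms keep not just one best solution but an archive of good solutions that behave differently from one another. Below is a minimal sketch of one well-known quality-diversity algorithm, MAP-Elites, assuming a toy "scene" encoded as a ground height per column (0 meaning a gap). The behavior descriptor (gap columns and height changes) and the fitness (penalizing wide gaps) are stand-ins invented for illustration; the article does not specify the students' algorithm, representation, or simulations.

```python
import random

WIDTH, MAX_HEIGHT = 10, 3  # toy scene: ground height per column, 0 means a gap

def random_scene(rng):
    return [rng.randint(0, MAX_HEIGHT) for _ in range(WIDTH)]

def mutate(scene, rng):
    """Copy the parent and change the height of one random column."""
    child = list(scene)
    child[rng.randrange(WIDTH)] = rng.randint(0, MAX_HEIGHT)
    return child

def descriptor(scene):
    """Behavior cell: (number of gap columns, number of height changes to handle)."""
    gaps = sum(1 for h in scene if h == 0)
    changes = sum(1 for a, b in zip(scene, scene[1:]) if a != b)
    return gaps, changes

def fitness(scene):
    """Toy quality score: the wider the widest run of gaps, the worse (harder to jump)."""
    widest = run = 0
    for h in scene:
        run = run + 1 if h == 0 else 0
        widest = max(widest, run)
    return -widest

def map_elites(iterations, seed=0, initial_random=20):
    rng = random.Random(seed)
    archive = {}  # cell -> (fitness, scene): the best scene found for each behavior
    for i in range(iterations):
        if i < initial_random:
            scene = random_scene(rng)  # seed the archive with random scenes
        else:
            _, parent = rng.choice(list(archive.values()))
            scene = mutate(parent, rng)
        cell, score = descriptor(scene), fitness(scene)
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, scene)
    return archive

archive = map_elites(2000)
```

Each archive cell ends up holding the best scene found for one combination of gaps and height changes, giving a designer a spread of scenes that exercise jumping to different degrees rather than a single "optimal" scene.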

“All of this work is being done in a generalized game framework,” explains Green. “Neural networks are very good at memorizing stuff efficiently and playing the game they are trained on almost perfectly. But most of our work involves teaching neural networks to do more than what they are trained on. The idea is to rebuild the architecture of a network and develop training strategies to allow it to apply knowledge to a broader range of possibilities, to apply what it’s learned to more than it ‘knows.’”

Beyond Games

The payoff of this work goes well beyond games, provided AI systems can carry what they learn from one context to another. As Togelius explains, the mechanics and rules of a highly complex video game like Starcraft closely resemble the real-world structures behind everything from global logistics businesses to industrial processes.

“Starcraft is a lot like running a company, getting the right thing to the right place at the right time. It’s a great model of some processes we can use for developing algorithms,” he says.

“Other more obvious applications are first-responder training, or, for autonomous cars in the automotive industry, AI trained on driving and racing games applied to simulations for self-driving cars.”

Closer to home, Togelius brought the same kinds of GANs the Lab uses for level generation and tutorials in games such as Super Mario Bros. to bear on fingerprint security, building on a project called MasterPrints, led by Nasir Memon, professor of computer science and engineering, and Arun Ross of Michigan State University. MasterPrints exposed profound security vulnerabilities in the fingerprint identification systems used in most smartphones.

DeepMasterPrints, led by Togelius and Bontrager (the paper’s lead author), took the idea a step further: the researchers were the first to combine a GAN with an evolutionary algorithm, a search method inspired by biological evolution. In this technique, called Latent Variable Evolution, the evolutionary algorithm searches the inputs of a trained GAN for synthetic prints that match as many as possible of the prints stored in fingerprint databases. The result was a print that, like a locksmith’s master key, could theoretically unlock a large number of devices. Bontrager presented the work at the IEEE International Conference on Biometrics: Theory, Applications and Systems, where it won the Best Paper Award.
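The core loop of Latent Variable Evolution can be sketched as below: the generator's weights stay fixed, and an evolutionary algorithm mutates only the generator's latent input, keeping a candidate whenever its output matches at least as many stored prints. This is a minimal sketch under stated assumptions: a fixed random linear map stands in for a trained GAN generator, "prints" are short bit vectors, a match means a Hamming distance under a threshold, and a simple elitist evolution strategy replaces whatever optimizer the researchers used. All names are hypothetical.

```python
import random

LATENT_DIM, PRINT_BITS = 8, 64

def make_generator(rng):
    """Hypothetical stand-in for a trained GAN generator: fixed random linear map, then threshold."""
    weights = [[rng.gauss(0, 1) for _ in range(LATENT_DIM)] for _ in range(PRINT_BITS)]

    def generate(z):
        # The generator's weights are never updated; only the latent vector z changes.
        return [1 if sum(w * zi for w, zi in zip(row, z)) > 0 else 0 for row in weights]

    return generate

def matches(candidate, database, max_mismatch):
    """Count stored prints within max_mismatch differing bits of the candidate."""
    return sum(
        1 for stored in database
        if sum(a != b for a, b in zip(candidate, stored)) <= max_mismatch
    )

def latent_variable_evolution(generate, database, generations=50, offspring=20,
                              sigma=0.5, max_mismatch=24, seed=1):
    """Elitist evolution strategy over the latent space of a fixed generator."""
    rng = random.Random(seed)
    best_z = [rng.gauss(0, 1) for _ in range(LATENT_DIM)]
    best_score = matches(generate(best_z), database, max_mismatch)
    for _ in range(generations):
        for _ in range(offspring):
            # Gaussian mutation of the current best latent vector.
            z = [zi + rng.gauss(0, sigma) for zi in best_z]
            score = matches(generate(z), database, max_mismatch)
            if score >= best_score:  # accept ties so the search can drift across plateaus
                best_z, best_score = z, score
    return best_z, best_score
```

Swapping in a real trained generator and a real fingerprint matcher would change only `generate` and `matches`; the search loop itself stays the same.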

“The paper had such impact partly because nobody had thought of combining GANs and evolutionary algorithms,” says Bontrager.

Origen.AI, a member of the Future Labs at NYU Tandon that counts Togelius as a chief scientific advisor, is also applying game-type AI to real-world contexts. The startup, founded and led by Rodriguez, focuses on increasing energy efficiency and reducing carbon emissions. Among other things, the company develops methods for optimizing the output of drilling operations, where analyzing geological data to identify optimal drilling locations is complex, time-consuming, and costly, yet extremely important.

“There are methods for doing these calculations, but they are extremely slow; even with the best computers it takes hours or days,” says Rodriguez. “But we can employ the same methods we use to predict the future situation in a game like Starcraft to do predictive modeling on drilling operations. We can predict what is going to happen if we drill new wells, pump oil, and inject water, and all of this turns out to be very much like playing a game.”