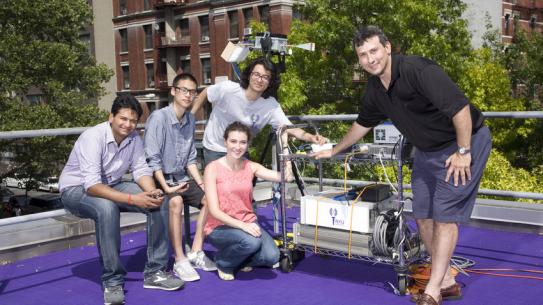

Prof. Giuseppe Loianno is an assistant professor at New York University and director of the Agile Robotics and Perception Lab (https://wp.nyu.edu/arpl/), working on autonomous Micro Aerial Vehicles. Prior to NYU, he was a lecturer, research scientist, and team leader at the General Robotics, Automation, Sensing and Perception (GRASP) Laboratory at the University of Pennsylvania. He received his BSc and MSc degrees in automation engineering, both with honors, from the University of Naples "Federico II" in December 2007 and February 2010, respectively. He received his PhD in computer and control engineering, with a focus on robotics, in May 2014. Dr. Loianno has published more than 70 conference papers, journal papers, and book chapters. His research interests include perception, learning, and control for autonomous robots. He received the NSF CAREER Award and the DARPA Young Faculty Award in 2022. He is also a recipient of the IROS Toshio Fukuda Young Professional Award in 2022, the Conference Editorial Board Best Associate Editor Award at ICRA 2022, and the Best Reviewer Award at ICRA 2016. He is currently the co-chair of the IEEE RAS Technical Committee on Aerial Robotics and Unmanned Aerial Vehicles and a juror in several worldwide robotics competitions. He was the program chair of the 2019 and 2020 IEEE International Symposium on Safety, Security and Rescue Robotics and the general chair in 2021. He is on the organizing committee of IROS 2024. He organized a series of seven consecutive workshops at the IEEE/RSJ International Conference on Intelligent Robots and Systems (2015-2022). He created the new International Symposium on Aerial Robotics (ISAR). His work has been featured in many international news outlets and magazines.

- NSF CAREER Award, 2022

- DARPA Young Faculty Award (YFA), 2022

- IEEE/RSJ International Conference on Intelligent Robots and Systems Toshio Fukuda Young Professional Award, 2022

- Best Associate Editor Award, Conference Editorial Board (CEB), IEEE International Conference on Robotics and Automation (ICRA) 2022, Philadelphia, USA

- Best Outstanding Deployed System Finalist, IEEE International Conference on Robotics and Automation (ICRA), 2022, Philadelphia, USA

- Second place, NYU Tandon Undergraduate Summer Research Program, 2022

- Mohamed Bin Zayed International Robotics Challenge, Winner of the 2020 competition

- National Italian American Foundation (NIAF) Young Investigator Award, 2018, Washington, D.C., USA

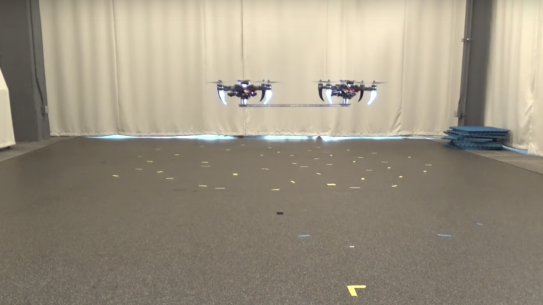

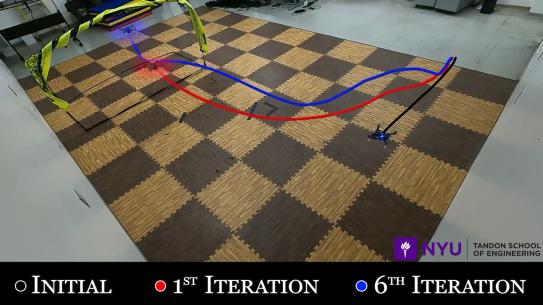

The Agile Robotics and Perception Lab (ARPL) performs fundamental and applied research in the area of robot autonomy. The main mission of the lab is to create agile autonomous machines that can navigate on their own in unstructured and dynamically changing environments, using only onboard sensors and without relying on external infrastructure such as GPS or motion capture systems. These machines need to be active: they should collaborate with humans and with each other, and they need to navigate unknown environments while extracting the best knowledge from them.

For specific projects, please visit the lab webpage:

https://wp.nyu.edu/arpl/research/