Misinformation on Facebook got six times more clicks than factual news during the 2020 election, study says

A new study of user behavior on Facebook around the 2020 election is likely to bolster critics’ long-standing arguments that the company’s algorithms fuel the spread of misinformation over more trustworthy sources.

The forthcoming peer-reviewed study by researchers at New York University (Tandon School of Engineering) and the Université Grenoble Alpes in France found that from August 2020 to January 2021, news publishers known for putting out misinformation got six times as many likes, shares, and interactions on the platform as trustworthy news sources such as CNN or the World Health Organization.

This NYU (Tandon) study is one of the few comprehensive attempts to measure and isolate the misinformation effect across a wide group of publishers on Facebook, experts said, and its conclusions support the criticism that Facebook’s platform rewards publishers that put out misleading accounts.

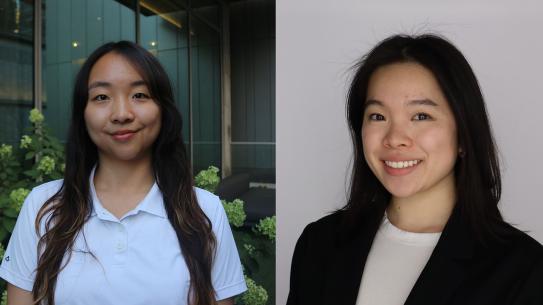

Last month Facebook suspended the accounts of study authors Damon McCoy, professor of computer science and engineering, and Laura Edelson, researcher and Ph.D. candidate, arguing that their data collection, which relied on users voluntarily downloading a software widget that lets researchers track the ads those users see, potentially put Facebook in violation of a 2019 U.S. Federal Trade Commission privacy settlement.

Edelson said the study showed that Facebook algorithms were not rewarding partisanship or bias, or favoring sites on one side of the political spectrum, as some critics have claimed. She said that Facebook amplifies misinformation because it does well with users, and the sites that happen to have more misinformation are on the right. Among publishers categorized as far right, those that share misinformation get a majority, 68 percent, of all user engagement.