AI & Local News newsletter, issue 11

In this issue, you’ll read about new developments in AI tech, including generative AI, potential federal AI policy, and fake news. As usual, there are implications for the news and digital media ecosystem.

I always want to learn more about how journalism engages with tech. If you’ve seen any studies or articles about tech workers at news organizations, send them my way!

Thanks

Matt MacVey

Community & Project Lead, AI & Local News

NYC Media Lab

matt.macvey@nyu.edu

PS If this email was forwarded to you, you can sign up here. Take a look at our newsletter archive to learn more.

AI & Local News Initiative

Associated Press: Learn more about AP’s Local News AI work.

Brown Institute’s Local News Lab: “We have closed out our product discovery work with one final workshop with our 4 very active and engaged partners (AfroLA, Dallas Free Press, Open Vallejo, and The 19th). We are proceeding with our “automating the ask” product and, to start, will be focusing on optimizing newsletter calls to action (or “asks”). We will be starting out with four hypotheses we are going to be testing as possible predictors for our machine learning-powered product. They are:

- If a reader gets to the bottom of a TBD number of stories, might they be more likely to subscribe to a newsletter?

- If a reader’s engagement with the site increases over time, might they be more likely to subscribe to a newsletter?

- If a reader visits a site’s homepage directly a number of times, might they be more likely to subscribe to a newsletter?

- If a reader visits an About Us/Support Us page, might they be more likely to subscribe to a newsletter?

We will evaluate these according to both quantitative (e.g. did the number of newsletter sign-ups increase?) and qualitative (e.g. did we learn anything tangible?) metrics.”

NYC Media Lab: “Go in-depth with the work of our AI & Local News Challenge teams in their recently published blog posts. These five teams developed new approaches to use AI and automation in news.

- Information Tracer

- Gannett/USA TODAY Network’s Localizer news automation program

- Overtone

- Building SimPPL for Local News

- Social Fabric”

Partnership on AI: Learn more about PAI’s Local News workstream. If you'd like to contact the PAI team directly and join their quarterly meeting, please reach out to Dalia Hashim at dalia@partnershiponai.org.

News

AI & Local News, Democracy, and Innovation

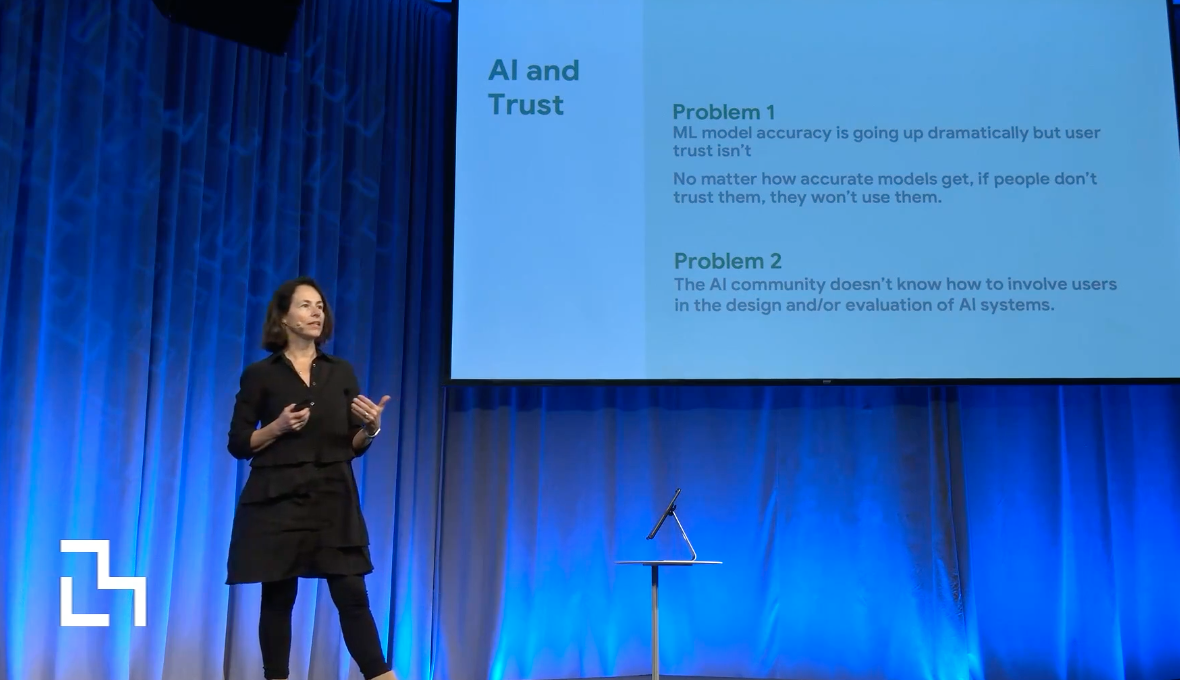

During the 2022 NYC Media Lab Summit, Aimee Rinehart (Program manager for Local News & AI at Associated Press) and Mona Sloane (faculty at NYU’s Tandon School of Engineering, Senior Research Scientist at the NYU Center for Responsible AI, organizer of the Co-Opting AI series, and part of the Gumshoe team) discussed the future of local news and how innovation, automation and AI would deliver quality news to their audiences.

- “We’re confident that AI can take on repetitive tasks to free up resources for more substantive work,” said Rinehart describing the impact of the AP’s local news AI initiative. AP surveyed more than 200 news organizations in all 50 U.S. states about their AI readiness and is currently developing a free curriculum for local newsrooms.

- Sloane presented Gumshoe, a project she is working on with NYU journalism professor Hilke Schellmann designed to help journalists hold the powerful accountable. Using Natural Language Processing (NLP), Gumshoe can help journalists search through massive datasets and better delve into FOIA datasets.

Also, take a look at this presentation from the same event about natural language processing work by Tuhin Chakrabarty, an NYC Media Lab New York Times R&D fellow.

How to solve the local news crisis: spend $10 billion a year

Ten billion dollars a year in order to hire 87,000 new journalists for about 1,300 news organizations: a massive investment that might address the huge decline in local news. In a provocative column in The Washington Post, Perry Bacon Jr. writes that “it’s time to just accept that good local news won’t make anyone much money and will need philanthropic and perhaps even government funding.” Bacon adds that younger generations are looking for free articles in a multiplatform format that mixes “text, audio, video and whatever formats emerge in the future. [...] Essentially, we need local versions of outlets like The Post, the New York Times, CNN and NPR — lots of original reporting, accessible in many formats.”

The Washington Post

Who’s going to save us from bad AI?

The U.S. has yet to develop clear guidance on how to protect its citizens against the possible negative outcomes of AI. For this reason, writes Melissa Heikkilä in the MIT Technology Review newsletter The Algorithm, the Biden administration’s Blueprint for an AI Bill of Rights could be an important pillar for holding tech companies accountable. The AI Bill of Rights outlines five protections Americans should have in the AI age:

- Safe and Effective Systems. You should be protected from unsafe or ineffective systems.

- Algorithmic Discrimination Protections. You should not face discrimination by algorithms and systems should be used and designed in an equitable way.

- Data Privacy. You should be protected from abusive data practices via built-in protections and you should have agency over how data about you is used.

- Notice and Explanation. You should know that an automated system is being used and understand how and why it contributes to outcomes that impact you.

- Human Alternatives, Consideration, and Fallback. You should be able to opt out, where appropriate, and have access to a person who can quickly consider and remedy problems you encounter.

However, according to this article in Vox, there is something missing from the White House’s AI ethics blueprint. Reporter Sigal Samuel says a law “is what we really need to make AI safe and fair for all citizens.”

MIT Technology Review, Vox

Automating Local News Stories at The Toronto Star

A weekly automated roundup of break-in statistics in Toronto districts, published by the Toronto Star, is generating discussion online. Nieman Lab staff writer Hanaa’ Tameez explores article automation and speaks with Cody Gault, product manager for content at the Toronto Star.

Nieman Lab

Events

Journalism AI Festival

Dates: December 7-8, 2022

Location: Virtual

Research Briefs

Defending Against Neural Fake News

Natural language generation is increasingly used to summarize and translate texts and to write accurate articles. However, as a paper co-authored by computer scientist Yejin Choi of the University of Washington and the Allen Institute for Artificial Intelligence states, “the underlying technology also might enable adversaries to generate neural fake news: targeted propaganda that closely mimics the style of real news.”

The paper presents a model for controllable text generation called Grover that, when given a headline, can generate an article. People find this AI-generated disinformation to be more trustworthy than human-written disinformation. The study found that “the best defense against Grover turns out to be Grover itself, with 92% accuracy, demonstrating the importance of public release of strong generators,” to avoid manipulation.

Choi was selected as a MacArthur fellow in October for her work “using natural language processing to develop artificial intelligence systems that can understand language and make inferences about the world.”

NeurIPS (Conference and Workshop on Neural Information Processing Systems)

Video

Life with AI, a panel about the future

Pattie Maes and Andy Lippman from the MIT Media Lab hosted a two-hour deep dive into generative artificial intelligence. Tune in for a great high-level look at the power of AI and different frameworks for looking at what AI is capable of from people at IDEO, Google, Midjourney and more.

Podcast

How AI is helping birth digital humans that look and sound just like us

This episode of In Machines We Trust explores the world of AI-powered replicas of real people that are taking on the jobs of entertainers, law enforcement and more. This new use of artificial intelligence gives rise to interesting applications as well as controversial questions.

MIT Technology Review

Food x Thought

Silicon Valley’s New Craze? Generative A.I. Yes, there’s a new, cool kid in Silicon Valley supplanting VC interest in meta and crypto: Generative A.I. Stability AI has raised $101 million in funding to grow the open-source Stable Diffusion AI image generator. Generative A.I. is revolutionizing the way humans might make art, come up with new ideas, and express their creativity. However, these tools are raising new questions about digital copyrights, who should control them, and what influencers and artists might create with them (a question The Information put to a group of 14 creators).

The Cultural Baggage Behind Feminized A.I. From the synths in the UK Channel 4 series Humans (2015-2018) to PAT in the 1999 Disney TV movie Smart House and Hollywood megafilm Her, we imagine a future of conversational feminine A.I. that often perpetuates “limited constructs of femininity as servile and pleasing to the eyes and ears,” writes Dorothy R. Santos in Slate.

Stats of the Month

“By more than 30 percentage points, Americans ages 18 to 34 surveyed were more receptive than those 55 or older when considering AI replacing people working as journalists, hiring managers, trial judges, spiritual advisers or leaders of religious congregations. Respondents ages 35 to 54 were in-between.” Media & Technology Survey

(An online poll of 1,000 adults across the United States conducted by the Communication Research Center (CRC) at Boston University’s College of Communication, in partnership with Ipsos, the market research company.)

The initiative is funded by Knight Foundation.

Newsletter produced by Angelo Paura