Fall 2018 Seminars

A complete listing

Silicon-based Integrated Sensors with On-chip Antennas: From THz Pulse Sources to Miniaturized Spectrometers

Speaker: Aydin Babakhani, UCLA

Time: 11:00 am - 12:00 pm Sep 5, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: Today’s silicon process technology makes it possible to integrate everything from antennas to processors on a single chip at almost no cost. This creates new opportunities for implementing complex sensors and systems on a millimeter scale. To create such devices, an understanding of physics, waves, electromagnetics, and high-frequency electronics is essential. In this presentation, I will show how the convergence of these fields has resulted in single-chip picosecond pulse radiators, wirelessly synchronized chips with sub-psec synchronization accuracy, miniaturized spectrometers, and wirelessly powered sensors and actuators. In the first section of the talk, I will present techniques for generating and detecting picosecond pulses, based on a novel laser-free Digital-to-Impulse (D2I) radiation. This technology can produce broadband pulses with a record width of 1.9psec that cover a frequency spectrum from 30GHz to 1.1THz and that have a resolution of 2Hz at 1THz. I will discuss how this technology enables us to perform broadband THz spectroscopy, hyper-spectral 3D imaging, and Tbits/sec wireless communication. In the second part, I will present my work on precision time transfer and wireless synchronization of widely spaced chips. This technique eliminates the wires between the elements of a distributed array and makes it possible to build a highly flexible large aperture. In this section, I will also present my work on optical locking of microwave oscillators, which achieves a picosecond timing accuracy over a 1.5m distance. In the third section of the talk, I will focus on miniaturized spectrometers and sensors. I will discuss an Electron Paramagnetic Resonance (EPR) spectrometer that is based on a single-chip full-duplex transceiver for detecting paramagnetic chemicals and free radicals. The EPR sensor technology developed in my laboratory has been successfully deployed in major oil and gas fields in the United States and Canada. 
This technology is used to monitor the concentration of asphaltenes (a chemical that clogs oil wells) in real-time and to minimize the use of environmentally hazardous chemical inhibitors in energy production. I will further present my recent work on wirelessly powered microchips with on-chip antennas. These microchips are designed to perform sensing, actuation, and localization. I will provide examples of such microchips being used to pace the heart of a sheep and to trigger the leg movement of a rat. Finally, I will discuss the future directions of my research on building wirelessly powered single-chip electronic drugs for medical applications and electronic tracers for energy exploration as well as for industrial monitoring.

About the Speaker: Dr. Babakhani is an Associate Professor in the Electrical and Computer Engineering Department at UCLA and Director of UCLA Integrated Sensors Laboratory. Before joining UCLA, he was an Associate Professor of ECE at Rice University and the Director of Rice Integrated Systems and Circuits Laboratory. He was a Louis Owen Junior Chair Assistant Professor (2016-2017) and Assistant Professor of ECE (2011-2016) at Rice University. He is a member of DARPA Microsystems Exploratory Council (MEC) and a co-founder of MicroSilicon Inc. He received his B.S. degree in electrical engineering from Sharif University of Technology in 2003 and his M.S. and Ph.D. degrees in Electrical Engineering from Caltech in 2005 and 2008, respectively. He was a postdoctoral scholar at Caltech in 2009 and a research scientist at IBM Thomas J. Watson Research Center in 2010. Dr. Babakhani has been awarded multiple best paper awards, including the Best Paper Award at the IEEE SiRF conference in 2016, the Best Paper Award at the IEEE RWS Symposium in 2015, the Best Paper Award at the IEEE IMS Symposium in 2014, and the 2nd-place in the Best Paper Awards at the IEEE APS Symposium 2016 and IEEE IMS Symposium 2016. He has published more than 85 papers in peer-reviewed journals and conference proceedings as well as 21 issued or pending patents. He received a prestigious NSF CAREER award in 2015, an Innovation Award from Northrop Grumman in 2014, and a DARPA Young Faculty Award in 2012.

Physical Human-Robot Interaction in Robotic Neuro-Rehabilitation

Speaker: S. Farokh Atashzar, The University of Western Ontario, Canada

Time: 11:00 am - 12:00 pm Sep 6, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: The incidence rate of age-related neuromuscular disorders and movement disabilities is rapidly increasing worldwide due to an aging society. While better medical care has increased survival rates, it has resulted in more patients in need of neuro-rehabilitation and assessment services. Stroke is the leading cause of motor disabilities. There are more than 6 million stroke survivors living in the United States. About 90% of survivors experience long-term disabilities and often require labor-intensive kinesthetic rehabilitation services for extended periods. This has placed a significant burden on healthcare systems. The situation is particularly concerning in remote areas where healthcare systems lack sufficient resources to serve the population of patients in need.

A potential solution is to develop smart mechatronic, robotic, and telerobotic technologies that provide safe and effective means of in-hospital and in-home kinesthetic neurorehabilitation and assessment services. In this regard, robotic rehabilitation systems have revolutionized the field of movement therapy. Combining sensorized force-enabled robotic systems with sophisticated virtual and augmented reality environments has provided a unique multimodal framework for neurorehabilitation and for studying sensorimotor characteristics of patients with neuromuscular disorders. Thus, during the last decade, utilization of robotic systems in neurorehabilitation and neuroscience has significantly increased.

Although there are specific advantages to the use of neuro-rehabilitation robotic systems, there still exist several technical and control challenges, such as (a) absence of direct physical interaction between the clinician and the patient who uses the robot, (b) limited accessibility in remote areas, (c) low transparency of the force field generated by the robotic system, and (d) questionable compatibility of the force field with biomechanical characteristics of the patient’s limbs. The last of these relates to the stability (and safety) of robotic rehabilitation systems, and is of particular concern when high rehabilitative forces are needed for severely disabled patients, when the robot is to be used in a patient’s home, and when the patient experiences involuntary movements (that can mislead the control unit of a robotic system). The above-mentioned challenges will be discussed in this talk, and possible solutions will be presented. In this regard, some research projects focusing on telerobotic rehabilitation systems, stabilizing controllers for physical human-robot interaction, and tremor estimation and cancelation techniques will be introduced. In addition to their use for therapeutic purposes, these robots have a high potential for studying various sensorimotor aspects of neuromuscular disorders. Thus, the talk will conclude with brief examples of robots that have been used to better understand neurological movement disorders such as Parkinson’s Disease, Focal Hand Dystonia, and Cerebral Palsy.

This talk will be presented with a multidisciplinary audience in mind. The focus will be on topics related to neuro-rehabilitation robotics. Due to the time limitation, topics related to surgical robotics will not be presented; however, the audience is more than welcome to ask questions regarding top lines of research in the area of surgical robotics as well.

About the Speaker: Dr. S. Farokh Atashzar obtained his Ph.D. degree in Robotics and Control, a program under the Electrical and Computer Engineering (ECE) Department at the University of Western Ontario (UWO), Canada, in 2016. Currently, he is a postdoctoral research associate at the Canadian Surgical Technologies and Advanced Robotics (CSTAR) center. Farokh joined UWO in 2011 to pursue his Ph.D. degree under the supervision of Prof. Rajni V. Patel. In 2012 and 2013, he was a doctoral trainee in the NSERC CREATE program in Computer-Assisted Medical Interventions (CAMI). He was a visiting research scholar at the Biorobotic Systems lab, University of Alberta, Canada, in 2014. Since 2015 he has conducted research for the Network of Centres of Excellence (NCE) program on “Aging Gracefully across Environments using Technology to Support Wellness, Engagement and Long Life (AGE-WELL),” focusing on design and control of neuro-rehabilitation robotic systems. Farokh has been the recipient of several prestigious awards including the Ontario Graduate Scholarship (OGS) in 2013 and the NSERC Post-Doctoral Fellowship (PDF) in 2018. He ranked fifth nationally in Canada in the NSERC PDF competition. Farokh’s research interests broadly involve topics related to neurorehabilitation robotics, surgical robotics, physical human-robot interaction, advanced nonlinear control systems for robotic systems, vibration control in flexible robots, bio-signal processing, adaptive filters, and advanced machine learning techniques. His research has been reported in more than 28 journal papers, 30 peer-reviewed conference papers, and 2 book chapters. Farokh was the lead organizer for a workshop on “Physical Human-robot and Human-telerobot Interaction: From Theory to Application for Neuro-rehabilitation” at the IEEE IROS 2017 conference, and a workshop on “Advanced Intelligent Mechatronics for Neuromuscular Rehabilitation and Recovery Assessment” at the IEEE AIM 2016 conference.
He was also a technical co-chair of a symposium on “Advanced Bio-signal Processing for Rehabilitation & Assistive Systems” at the 2017 IEEE GlobalSIP conference, and a co-organizer of the special session on “Bio-Signal Processing for Movement Assessment, Neuro-Rehabilitation, and Assistive Technologies” at the 2017 IEEE SMC conference. Farokh is currently serving as the general chair of the symposium on “Advanced Bio-Signal Processing and Machine Learning for Medical Cyber-Physical Systems” at the 2018 IEEE GlobalSIP conference, Anaheim, CA, USA. He is also a guest associate editor of the special issue on “Intelligent Human-Robot Interaction for Rehabilitation & Assistance,” to be published in IEEE Robotics and Automation Letters.

Physics and modeling of negative capacitance field-effect transistors

Speaker: Yogesh Singh Chauhan, Indian Institute of Technology Kanpur (IITK), India

Time: 11:00 am - 12:00 pm Sep 18, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: The ongoing scaling of CMOS technology is now reaching its limit because supply voltage reduction is restricted by the subthreshold swing (SS) of 60mV/decade, the minimum achievable at room temperature owing to Boltzmann transport of the charge carriers. The concept of negative capacitance, proposed to achieve a sub-60mV/decade SS, is currently seen as one of the potential solutions to the problem. A “negative capacitance transistor (NCFET)” employs a ferroelectric material in the gate stack of a FET, providing a negative capacitance and thereby an “internal voltage amplification” at the gate of the internal FET, which helps in reducing SS. Several experiments have successfully demonstrated an improved SS with bulk MOSFETs, FinFETs, and 2D FETs. The improvement in subthreshold characteristics is also accompanied by an increased ON current relative to the reference FET, as has been observed in both simulation studies and experiments. In this talk, I will discuss the physics and modeling of various NCFET structures and the impact of this new transistor on circuits, including processors.

About the Speaker: Yogesh Singh Chauhan is an associate professor at the Indian Institute of Technology Kanpur (IITK), India. He was with the Semiconductor Research & Development Center at IBM Bangalore during 2007-2010; Tokyo Institute of Technology in 2010; University of California Berkeley during 2010-2012; and ST Microelectronics during 2003-2004. He is the developer of the industry-standard BSIM-BULK (formerly BSIM6) model for bulk MOSFETs and the ASM-HEMT model for GaN HEMTs. His group is also involved in developing compact models for FinFET, Nanosheet/Gate-All-Around FET, FDSOI transistors, Negative Capacitance FETs and 2D FETs.

He serves as Editor of IEEE Transactions on Electron Devices and as a Distinguished Lecturer of the IEEE Electron Devices Society. He is a member of the IEEE-EDS Compact Modeling Committee and a fellow of the Indian National Young Academy of Science (INYAS). He is the founding chairperson of the IEEE Electron Devices Society U.P. chapter and Vice-chairperson of the IEEE U.P. section. He has published more than 200 papers in international journals and conferences.

He received the Ramanujan fellowship in 2012, the IBM faculty award in 2013, the P. K. Kelkar fellowship in 2015, and the CNR Rao faculty award and the Humboldt fellowship in 2018. His research interests are characterization, modeling, and simulation of semiconductor devices. He has served on the technical program committees of the IEEE International Electron Devices Meeting (IEDM), IEEE International Conference on Simulation of Semiconductor Processes and Devices (SISPAD), IEEE European Solid-State Device Research Conference (ESSDERC), IEEE Electron Devices Technology and Manufacturing (EDTM), and IEEE International Conference on VLSI Design and International Conference on Embedded Systems.

Imitating the Clairvoyant Oracle: From Information Gathering to Grounded Visual Navigation via Natural Language

Speaker: Debadeepta Dey, Microsoft

Time: 11:00 am - 12:00 pm Sep 25, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: In the adaptive information gathering problem, a robot is required to select an informative sensing location using the history of measurements acquired thus far. While there is an extensive amount of prior work investigating effective practical approximations using variants of Shannon’s entropy, the efficacy of such policies greatly depends on the geometric distribution of objects in the world. On the other hand, the principled approach of employing online POMDP solvers is rendered impractical by the need to explicitly sample online from a posterior distribution of world maps. We present a novel data-driven imitation learning framework to efficiently train information gathering policies. The policy imitates a clairvoyant oracle - an oracle that at training time has full knowledge about the world map and can compute maximally informative sensing locations. We analyze the learnt policy by showing that offline imitation of a clairvoyant oracle is implicitly equivalent to online oracle execution in conjunction with posterior sampling. This observation allows us to obtain powerful near-optimality guarantees for information gathering problems possessing an adaptive submodularity property. As we demonstrate on a spectrum of 2D and 3D exploration problems with aerial vehicles, the trained policies enjoy the best of both worlds - they adapt to different world map distributions while being computationally inexpensive to evaluate.

We also show in very recent work that this idea of imitating a clairvoyant oracle (in a learning-to-search framework) yields better performing policies for the task of visual navigation from natural language instructions in indoor environments. I will also provide a quick overview of ongoing exciting reinforcement and imitation learning related projects in MSR Redmond.

About the Speaker: Debadeepta Dey is a researcher in the Adaptive Systems and Interaction (ASI) group at Microsoft Research, Redmond. He received his PhD at the Robotics Institute, Carnegie Mellon University. He conducts fundamental as well as applied research at the intersection of machine learning, controls and computer vision with applications to autonomous agents in general and robotics in particular with an aim to bridge the gap between perception and action. His interests include decision-making under uncertainty, reinforcement learning, planning and perception.

Circuits: Terahertz (THz) and Beyond Seminar Series: Terahertz Communications: From Nanomaterials to Ultra-broadband Networks

Speaker: Josep Miquel Jornet, University at Buffalo

Time: 11:00 am - 12:00 pm Sep 27, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: Wireless data traffic has grown exponentially in recent years due to a change in the way today's society creates, shares and consumes information. This change has been accompanied by an increasing demand for higher speed wireless communications, anywhere, anytime. Following the current trend, wireless Terabit-per-second (Tbps) links are expected to become a reality within the next ten years. In this context, Terahertz (THz)-band (0.1-10 THz) communication is envisioned as a key wireless technology of the next decade. The THz band will help overcome the spectrum scarcity problems and capacity limitations of current wireless networks, by providing an unprecedentedly large bandwidth. In addition, THz-band communication will enable a plethora of long-awaited applications, both at the nano-scale and at the macro-scale, ranging from wireless massive-core computing architectures and instantaneous data transfer among non-invasive nano-devices, to ultra-high-definition content streaming among mobile devices and wireless high-bandwidth secure communications. In this seminar, an in-depth view of THz-band communication networks will be provided. First, the state of the art and open challenges in the design and development of THz transceivers and antennas will be presented, with special emphasis on novel hybrid graphene/semiconductor plasmonic devices. Then, the current progress and future research directions in terms of channel modeling, physical and link layers design, will be tackled in a bottom-up approach, defining a roadmap for the development of this next frontier in wireless communication.

About the Speaker: Josep M. Jornet is an Assistant Professor in the Department of Electrical Engineering at the University at Buffalo (UB), The State University of New York. He received the B.S. in Telecommunication Engineering and the M.Sc. in Information and Communication Technologies from the Universitat Politecnica de Catalunya, Barcelona, Spain, in 2008. He received the Ph.D. degree in Electrical and Computer Engineering from the Georgia Institute of Technology (Georgia Tech), Atlanta, GA, in 2013. From September 2007 to December 2008, he was a visiting researcher at the Massachusetts Institute of Technology (MIT), Cambridge, under the MIT Sea Grant program. He was the recipient of the Oscar P. Cleaver Award for outstanding graduate students in the School of Electrical and Computer Engineering at Georgia Tech in 2009. He also received the Broadband Wireless Networking Lab Researcher of the Year Award in 2010. In 2016, 2017 and 2018, he received the Distinguished TPC Member Award at the IEEE International Conference on Computer Communications (INFOCOM). In 2017, he received the IEEE Communications Society Young Professional Best Innovation Award, the ACM NanoCom Outstanding Milestone Award and the UB SEAS Early Career Researcher of the Year Award. In 2018, he received the UB Exceptional Scholar Award, Young Investigator Award. His current research interests are in Terahertz-band communication networks, Wireless Nano-bio-sensing Networks, and the Internet of Nano-Things. In these areas, he has co-authored more than 100 peer-reviewed scientific publications and 1 book, and has been granted 3 US patents. Since July 2016, he has been the Editor-in-Chief of the Nano Communication Networks (Elsevier) Journal, and he serves on the Steering Committee of the ACM/IEEE NanoCom Conference Series. He is a member of the IEEE, the ACM and the SPIE.

Circuits: Terahertz (THz) and Beyond Seminar Series: Monolithic Phased Arrays: Radiofrequencies to Optical Frequencies

Speaker: Hossein Hashemi, USC

Time: 11:00 am - 12:00 pm Oct 3, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: In 1909, accepting the Nobel Prize for Physics for “contributions to the development of wireless telegraphy”, Karl Ferdinand Braun stated “It had always seemed most desirable to me to transmit the waves, in the main, in one direction only.” He also presented a schematic of a three-antenna system that can form a desired beam in one direction. In the following decades, this simple scheme was advanced to large-scale phased arrays used for military radars in WWII and beyond. In 1965, Gordon Moore predicted that advances in integrated circuit technology would enable monolithic microwave phased arrays that could revolutionize radar. Nearly four decades later, monolithic microwave silicon phased arrays were reported for the first time. Today, monolithic radio frequency, microwave, and millimeter wave phased arrays are turning mainstream in commercial products including automotive radars and high-speed wireless transceivers, including those for the upcoming 5G wireless standards.

Shortly after the invention of the laser in 1960, an electronically steerable laser beam was conceived to be useful in optical radars (nowadays known as lidars), free-space optical communications with a moving transmitter and/or receiver, and projection television. While optical beam steering has been around for several decades, monolithic optical phased arrays are more recent thanks to advancements in fabrication technology. Very recently, the interest in optical beam steering and monolithic optical phased arrays has increased thanks to the application of low-cost compact lidar in self-driving cars. Other potential applications of optical phased arrays include free-space optical communications, imaging, sensing, display, and holography.

This talk will cover the basics, history, and applications of phased arrays followed by selected state-of-the-art realizations of phased array systems and related components from radio frequencies up to optical frequencies.

About the Speaker: Hossein Hashemi is a Professor of Electrical Engineering, Ming Hsieh Faculty Fellow, and the co-director of the Ming Hsieh Institute at the University of Southern California. His research interests include analog, mixed-signal, and radio-frequency integrated circuits; photonic integrated circuits; electro-optical integrated systems; and implantable integrated solutions. He received the B.S. and M.S. degrees in Electronics Engineering from the Sharif University of Technology, Tehran, Iran, in 1997 and 1999, respectively, and the M.S. and Ph.D. degrees in Electrical Engineering from the California Institute of Technology, Pasadena, in 2001 and 2003, respectively. Hossein is an Associate Editor for the IEEE Journal of Solid-State Circuits (2013 – present). He was a Distinguished Lecturer for the IEEE Solid-State Circuits Society (2013 – 2014); a member of the Technical Program Committee of the IEEE International Solid-State Circuits Conference (ISSCC) (2011 – 2015), the IEEE Radio Frequency Integrated Circuits (RFIC) Symposium (2011 – present), and the IEEE Compound Semiconductor Integrated Circuits Symposium (CSICS) (2010 – 2014); an Associate Editor for the IEEE Transactions on Circuits and Systems—Part I: Regular Papers (2006 – 2007) and the IEEE Transactions on Circuits and Systems—Part II: Express Briefs (2004 – 2005); and Guest Editor for the IEEE Journal of Solid-State Circuits (Oct 2013 & Dec 2013).

Hossein was the recipient of the 2016 Nokia Bell Labs Prize, 2015 IEEE Microwave Theory and Techniques Society (MTT-S) Outstanding Young Engineer Award, 2008 Defense Advanced Research Projects Agency (DARPA) Young Faculty Award, and a National Science Foundation (NSF) CAREER Award. He received the USC Viterbi School of Engineering Junior Faculty Research Award in 2008, and was recognized as a Distinguished Scholar for the Outstanding Achievement in Advancement of Engineering by the Association of Professors and Scholars of Iranian Heritage in 2011. He was a co-recipient of the 2004 IEEE Journal of Solid-State Circuits Best Paper Award for “A Fully-Integrated 24 GHz 8-Element Phased-Array Receiver in Silicon” and the 2007 IEEE International Solid-State Circuits Conference (ISSCC) Lewis Winner Award for Outstanding Paper for “A Fully Integrated 24 GHz 4-Channel Phased-Array Transceiver in 0.13um CMOS based on a Variable Phase Ring Oscillator and PLL Architecture”.

Hossein is the co-editor of the books “Millimeter-Wave Silicon Technology: 60 GHz and Beyond” published by Springer in 2008, and “mm-Wave Silicon Power Amplifiers and Transmitters” published by the Cambridge University Press in 2016.

Brief History of Radio

Speaker: Fred Harris, UCSD

Time: 11:00 am - 12:00 pm Oct 8, 2018

Location: 2MTC Room 10.099, Brooklyn, NY

Abstract: The history of radio is a remarkable account of a disruptive technology that truly changed the world. What a remarkable idea: communication at a distance (communicating faster than a man or woman can run). It is a concept that nearly eclipses the wonders of tomorrow foretold by famous futurists such as H. G. Wells and Jules Verne. It is the stuff of science fiction made real! Radio and its progeny (television, satellites, cell phones, Zigbee, radar, and others) have had, and continue to have, enormous social, political, and economic impact on us all.

This presentation is a non-technical walk along a technology path with acknowledgements to familiar names of scientists, inventors, innovators, and industrialists with light reflections of their contributions to the discoveries and developments that have taken us through an unfinished journey that started 190 years ago. At one level, radio is circuits, equations, vacuum tubes, transistors, amplifiers, antennae, and modulation theory. At another level it is a fascinating record of people whose contributions have brought us the applied magic we call radio. Do the names Oersted, Faraday, Maxwell, Helmholtz, Hertz, Marconi, Poulsen, de Forest, Armstrong, Popoff, Sarnoff, and Shannon, sound familiar? Join us in this fun walk down memory lane to gently prod your recollections of these giants who have come before us.

As an aside, I am not a historian but rather a technologist. I use the history of radio in my modem design class to give students a perspective of why and how we arrived at certain design structures. I point out that design compromises made in past radios were appropriate for the time they were made but that we should not propagate these compromises to next generation radios. We have technologies and capabilities not available to our predecessors and we should use them to improve rather than to emulate previous designs. I also find that it is fun and enlightening to know the history.

About the Speaker: Professor Harris is at the University of California San Diego where he teaches and conducts research on Digital Signal Processing and Communication Systems. He holds 40 patents on digital receiver and DSP technology and lectures throughout the world on DSP applications. He consults for organizations requiring high performance, cost effective DSP solutions.

He has written some 260 journal and conference papers, the most well-known being his 1978 paper “On the use of Windows for Harmonic Analysis with the Discrete Fourier Transform”. He is the author of the book Multirate Signal Processing for Communication Systems and has contributed to several other DSP books. His special areas include Polyphase Filter Banks, Physical Layer Modem design, and Synchronizing Digital Modems.

He was the Technical and General Chair respectively of the 1990 and 1991 Asilomar Conference on Signals, Systems, and Computers, was Technical Chair of the 2003 Software Defined Radio Conference, of the 2006 Wireless Personal Multimedia Conference, of the DSP-2009, DSP-2013 Conferences and of the SDR-WinnComm 2015 Conference. He became a Fellow of the IEEE in 2003, cited for contributions of DSP to communications systems. In 2006 he received the Software Defined Radio Forum’s “Industry Achievement Award”. He was recently notified that in Shanghai, this coming October 2018, he will receive the DSP-2018 conference’s commemorative plaque with the citation: We wish to recognize and pay tribute to Fred Harris for his pioneering contributions to digital signal processing algorithmic design and implementation, and his visionary and distinguished service to the Signal Processing Community. The spelling of his name with all lower case letters is a source of distress for typists and spell checkers. A child at heart, he collects toy trains and old slide-rules.

Modern Artificial Intelligence Seminar Series: The AI Trinity: Data + Algorithms + Infrastructure

Speaker: Anima Anandkumar, Caltech

Time: 11:00 am - 12:00 pm Oct 9, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: AI at scale requires a perfect storm of data, algorithms and cloud infrastructure. Modern deep learning has relied on large labeled datasets for training. However, such datasets are not easily available in all domains, and are expensive or difficult to collect. By building intelligence into data collection and aggregation, we can drastically reduce data requirements. Additionally, algorithmic and infrastructure innovations now make it possible to train models at scale. In the future, we will see more integration of these three pillars to advance AI.

About the Speaker: Anima Anandkumar is a Bren professor in the Computing + Mathematical Sciences department at Caltech. Her research interests span the theory and practice of large-scale machine learning. In particular, she has been spearheading the development and analysis of tensor algorithms for machine learning. She is the recipient of several awards such as the Bren endowed chair professorship at Caltech, Alfred P. Sloan Fellowship, Microsoft Faculty Fellowship, Google research award, ARO and AFOSR Young Investigator Awards, NSF CAREER Award, and several best paper awards. She received her B.Tech in Electrical Engineering from IIT Madras in 2004 and her PhD from Cornell University in 2009. She was a postdoctoral researcher at MIT from 2009 to 2010, an assistant professor at U.C. Irvine between 2010 and 2016, a visiting researcher at Microsoft Research New England in 2012 and 2014, and a Principal Scientist at Amazon Web Services from 2016 to 2018.

Dean's Lecture: The Era of Artificial Intelligence

Speaker: Kai-Fu Lee, Chairman and CEO of Sinovation Ventures

Time: 1:00 pm - 3:00 pm Oct 9, 2018

Location: 370 Jay, Seminar Room 202, Brooklyn, NY

Abstract: In this talk, I will discuss the four waves of Artificial Intelligence (AI), and how AI will permeate every part of our lives in the next decade. I will also explain how this will be different from previous technology revolutions -- it will be faster and be driven by not one superpower, but two (US and China). AI will add $16 trillion to our global GDP, but also cause many challenges that will be hard to solve. I will focus in particular on AI replacing routine jobs -- the consequences, the proposed solutions that don't work (such as UBI), and end with a blueprint of co-existence between humans and AI.

About the Speaker: Dr. Kai-Fu Lee is the Chairman and CEO of Sinovation Ventures (www.sinovationventures.com) and President of Sinovation Ventures’ Artificial Intelligence Institute. Sinovation Ventures, managing US$1.7 billion dual currency investment funds, is a leading venture capital firm focusing on developing the next generation of Chinese high-tech companies. Prior to founding Sinovation in 2009, Dr. Lee was the President of Google China. Previously, he held executive positions at Microsoft, SGI, and Apple. Dr. Lee received his Bachelor’s degree in Computer Science from Columbia University and his Ph.D. from Carnegie Mellon University, as well as Honorary Doctorate Degrees from both Carnegie Mellon and the City University of Hong Kong. He was named one of the 100 most influential people in the world by Time in 2013. He is also a Fellow of the Institute of Electrical and Electronics Engineers (IEEE), and is followed by over 50 million people on social media.

In the field of artificial intelligence, Dr. Lee built one of the first game-playing programs to defeat a world champion (1988, Othello), as well as the world’s first large-vocabulary, speaker-independent continuous speech recognition system. Dr. Lee founded Microsoft Research China, which was named the hottest research lab by MIT Technology Review. Later renamed Microsoft Research Asia, this institute trained the great majority of AI leaders in China, including CTOs or AI heads at Baidu, Tencent, Alibaba, Lenovo, Huawei, and Haier. While with Apple, Dr. Lee led AI projects in speech and natural language, which have been featured on Good Morning America on ABC Television and the front page of the Wall Street Journal. He has authored 10 U.S. patents and more than 100 journal and conference papers. Altogether, Dr. Lee has been in artificial intelligence research, development, and investment for more than 30 years. His latest book, AI Superpowers (aisuperpowers.com), releasing fall 2018, discusses US-China co-leadership in the age of AI as well as the greater societal impacts brought about by the AI technology revolution.

Circuits: Terahertz (THz) and Beyond Seminar Series: Wireless above 100 GHz

Speaker: Mark Rodwell, University of California, Santa Barbara

Time: 11:00 am - 12:00 pm Oct 11, 2018

Location: 370 Jay, Seminar Room 202, Brooklyn, NY

Abstract: With the RF bands below ~5GHz soon to be exhausted, industry is poised to move to 5G systems, with carriers at 28, 38, 57-71 (WiGig), and 71-86GHz. Research now explores the next generation of wireless systems, operating between 100 and 340GHz. Such systems can support massive spatial multiplexing in both endpoint and backhaul links, and will require high-frequency transistors in VLSI and in III-V technologies, phased-array transceiver front-ends, and complex silicon RF ICs to form and aim multiple beams and to null or equalize multipath interference. We will summarize THz transistor design, IC development from 100-1000 GHz, and array and system design.

About the Speaker: Mark Rodwell (Ph.D. Stanford University 1988) holds the Doluca Family Endowed Chair in Electrical and Computer Engineering at UCSB. He directs the SRC/DARPA Center for Converged TeraHertz Communications and Sensing. His research group develops nm and THz transistors, and mm-wave and sub-mm-wave integrated circuits and systems. The work of his group and collaborators has been recognized by the 2010 IEEE Sarnoff Award, the 2012 IEEE Marconi Prize Paper Award, the 1997 IEEE Microwave Prize, the 1998 European Microwave Conference Microwave Prize, and the 2009 IEEE IPRM Conference Award.

NYU Abu Dhabi ECE Faculty Candidate Seminar: Machine Learning and Swarm Decomposition: New approaches of Human Behavioral Modeling in Bullying, Depression, Parkinson’s early detection and Baby Crying Translation

Speaker: Leontios J. Hadjileontiadis, NYUAD

Time: 11:00 am - 12:00 pm Oct 15, 2018

Location: 2 MTC Room 10.099, Brooklyn, NY

Abstract: Machine Learning (ML) is a branch of artificial intelligence based on the idea that systems can learn from data, identify patterns and make decisions with minimal human intervention. While many ML algorithms have been around for a long time, the ability to automatically apply complex mathematical calculations to big data – over and over, faster and faster, deeper and deeper – is a recent development, leading to the realization of so-called Deep Learning (DL). The latter has an intuitive capability that is similar to that of biological brains. It is able to handle the inherent unpredictability and fuzziness of the natural world. In this seminar, the main aspects of ML and DL will be presented, with a focus on the way they are used to shed light on Human Behavioral Modeling. In this effort, a new data decomposition approach based on swarm analysis will be presented and its combination with DL will be explored for the case of brain responses to bullying incident stimuli within immersive environments. In addition, ML-based approaches will be presented for identifying fine-motor skill deterioration due to early Parkinson’s and depression symptoms reflected in keystroke dynamics during smartphone interaction, along with baby crying translation via ML-based pattern recognition. Finally, a wider perspective on current research and future plans will conclude the seminar.

About the Speaker: Leontios J. Hadjileontiadis (IEEE S’87–M’98–SM’11) was born in Kastoria, Greece, in 1966. He received the Diploma Degree in Electrical Engineering in 1989 and the Ph.D. Degree in Electrical and Computer Engineering in 1997, both from the Aristotle University of Thessaloniki (AUTH), Thessaloniki, Greece. He also received a Diploma Degree in Musicology from AUTH in 2011, and the Ph.D. Degree in Music Composition from the University of York, York, U.K., in 2004. His research interests include advanced signal processing, machine learning, biomedical engineering, affective computing, and active and healthy ageing. His publication record includes >100 papers in peer-reviewed international journals, >150 papers in peer-reviewed international conference proceedings, 6 books, 2 edited books, and 24 book chapters. He has vast experience in project management, having so far coordinated European and UAE projects worth >US$6,000,000. Prof. Hadjileontiadis has been recognized, among other awards, as an innovative researcher and champion faculty by Microsoft, USA (2012) and received the Silver Award in Teaching Delivery at the Reimagine Education Awards (2017-2018). Just recently (July 2018), he and his student team received the 2nd Award in the world finals of Microsoft Imagine Cup 2018 (Seattle, USA) for the iCry2Talk project. He is a Senior Member of IEEE. Moreover, he has written more than 90 compositions in various musical genres, which have been performed worldwide and have received ten awards. He is a Professor of Composition at the State Conservatory of Thessaloniki and the Vice President of the Greek Composers’ Union. His most recent compositional works belong to the field of biomusic, employing real-time biosignals (EEG, HR, gestures) combined with stochastic modeling in the compositional process.

Ernst Weber Lecture: The Evolution of Public Key Cryptography

Speaker: Martin Hellman, Stanford

Time: 11:00 am - 12:00 pm Oct 23, 2018

Location: 370 Jay Room 201, Brooklyn, NY

Abstract: While public key cryptography is seen as revolutionary, after this talk you might wonder why it took Whit Diffie, Ralph Merkle and Hellman so long to discover it. This talk also highlights the contributions of some unsung (or “under-sung”) heroes: Ralph Merkle, John Gill, Stephen Pohlig, Richard Schroeppel, Loren Kohnfelder, and researchers at GCHQ (Ellis, Cocks, and Williamson).

About the Speaker: Martin Hellman is best known for inventing public key cryptography, the technology that enables secure Internet transactions. He also has contributed to the computer privacy debate, and was a key participant in the “first crypto war” of the late 1970s. He has authored over seventy technical papers, twelve US patents and a number of foreign equivalents. His many honors include election to the National Academy of Engineering and receiving (jointly with his colleague Whit Diffie) the million dollar ACM Turing Award, the top prize in computer science.

Modern Artificial Intelligence Seminar Series: The Blessings of Multiple Causes

Speaker: David Blei, Columbia University

Time: 11:00 am - 12:00 pm Oct 29, 2018

Location: 370 Jay Seminar Room 201, Brooklyn, NY

Abstract: Causal inference from observational data is a vital problem, but it comes with strong assumptions. Most methods require that we observe all confounders, variables that correlate with both the causal variables (the treatment) and the effect of those variables (how well the treatment works). But whether we have observed all confounders is a famously untestable assumption. We describe the deconfounder, a way to do causal inference from observational data with weaker assumptions than the classical methods require.

How does the deconfounder work? While traditional causal methods measure the effect of a single cause on an outcome, many modern scientific studies involve multiple causes, different variables whose effects are simultaneously of interest. The deconfounder uses the multiple causes as a signal for unobserved confounders, combining unsupervised machine learning and predictive model checking to perform causal inference.

We describe the theoretical requirements for the deconfounder to provide unbiased causal estimates, and show that it requires weaker assumptions than classical causal inference. We analyze the deconfounder's performance in three types of studies: semi-simulated data around smoking and lung cancer, semi-simulated data around genome-wide association studies, and a real dataset about actors and movie revenue. The deconfounder provides a checkable approach to estimating close-to-truth causal effects. This is joint work with Yixin Wang.

About the Speaker: David Blei is a Professor of Statistics and Computer Science at Columbia University, and a member of the Columbia Data Science Institute. His research is in statistical machine learning, involving probabilistic topic modeling, scalable Bayesian algorithms, and interpretable generative models. He works on a variety of applications, using data from text, images, medicine, the social sciences, and the natural sciences. David has received several awards for his research, including a Sloan Fellowship (2010), Office of Naval Research Young Investigator Award (2011), Presidential Early Career Award for Scientists and Engineers (2011), Blavatnik Faculty Award (2013), ACM-Infosys Foundation Award (2013), and a Guggenheim fellowship (2017). He is the co-editor-in-chief of the Journal of Machine Learning Research. He is a fellow of the ACM and the IMS.

ECE Special Seminar Series: Joy Laskar: Solving the 5G Data Transport Layer Problem with mmW CMOS Products

Speaker: Joy Laskar, Maja Systems, Milpitas, CA

Time: 11:00 am - 12:00 pm Nov 2, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: Since the first demonstration of mmW wireless connectivity in 1895, there has been much interest and promise in the future of mmW gigabit wireless technology. Only recently, with the emergence of CMOS-based technology and its capacity for low-cost monolithic single-chip integration, has it become possible to envision a new class of systems and applications for low-delay, high-throughput connectivity, which forms the foundation for the 5G revolution. In this presentation, we focus on recent breakthroughs at Maja Systems with its Air Data™ product line for data center, autonomous vehicle, enterprise connectivity, and mobile 5G customers.

About the Speaker: Joy Laskar received his B.Sc. in Computer Engineering (with Physics and Math Minors) from Clemson University and the M.Sc. and Ph.D. degrees in Electrical Engineering from the University of Illinois at Urbana-Champaign. Dr. Laskar is a co-founder and CTO/SVP of Maja Systems. Dr. Laskar’s technical expertise and contributions are at the intersection of Radio Frequency Electronics, Analog Electronics and Electromagnetics. Dr. Laskar has co-founded 5 companies, co-authored 5 textbooks, published more than 600 peer-reviewed journal and conference papers, has 60 patents (issued or pending), and has graduated 41 Ph.D. students (while holding various tenured faculty positions at the University of Hawaii and Georgia Tech). Maja Systems has helped pioneer the development of low-power millimeter-wave gigabit wireless products for Enterprise Connectivity, Data Center, Automotive and emerging 5G platforms. Dr. Laskar is an IEEE Fellow and a member of the IEEE Access Editorial Board.

When Can Machine Learning Be Useful for Communication Systems?

Speaker: Osvaldo Simeone, King's College London, UK.

Time: 2:00 pm - 3:00 pm Nov 2, 2018

Location: 2 MetroTech Center, 10.099 Conference Room, Brooklyn, NY

Abstract: Given the unprecedented availability of data and computing resources, there is widespread renewed interest in applying data-driven machine learning methods to problems in which the deployment of conventional engineering solutions is challenged by modeling or algorithmic deficiencies. The talk starts by addressing the questions of why and when such techniques can be useful. Then, some exemplifying applications to communication networks are discussed by distinguishing tasks carried out at the edge and at the cloud of the network at different layers of the protocol stack.

About the Speaker: Professor Osvaldo Simeone received an M.Sc. degree (with honors) and a Ph.D. degree in information engineering from Politecnico di Milano, Milan, Italy, in 2001 and 2005, respectively. From 2006 to 2017, he was a faculty member of the Electrical and Computer Engineering (ECE) Department at New Jersey Institute of Technology (NJIT), where he was affiliated with the Center for Wireless Information Processing (CWiP). Currently he is a Professor of Information Engineering with the Centre for Telecommunications Research at the Department of Informatics of King's College London. His research interests include wireless communications, information theory, optimization and machine learning. Dr Simeone is a co-recipient of the 2017 JCN Best Paper Award, the 2015 IEEE Communication Society Best Tutorial Paper Award and the Best Paper Awards of IEEE SPAWC 2007 and IEEE WRECOM 2007. He was awarded a Consolidator grant by the European Research Council (ERC) in 2016. His research has been supported by the U.S. NSF, the ERC, the Vienna Science and Technology Fund, as well as by a number of industrial collaborations. He currently serves on the editorial board of the IEEE Signal Processing Magazine, and he is a Distinguished Lecturer of the IEEE Information Theory Society. Dr Simeone is a co-author of two monographs, an edited book published by Cambridge University Press, and more than one hundred research journal papers. He is a Fellow of the IET and of the IEEE.

Circuits: Terahertz (THz) and Beyond Seminar Series: Enabling the Third Wireless Revolution through Transformative RF/mmWave Circuits, Wireless Systems and Sensing Paradigms

Speaker: Harish Krishnaswamy, Columbia University

Time: 11:00 am - 12:00 pm Nov 7, 2018

Location: 370 Jay Seminar Room 1201, Brooklyn, NY

Abstract: Over the past 30 years, we have reaped the benefits of two wireless communication revolutions, which have had significant social and economic impact. The period from 1990-2000 saw the mobile wireless communication revolution, as cellular mobile telephony enabled human beings across the globe to be instantly connected with each other. The period from 2000 to the present day is witness to the mobile wireless data revolution, as 3G and 4G networks have brought the Internet to our fingertips. However, as massive as the amount of data that exists on the Internet is, it pales in comparison to the amount of data inherently present in the constitution of our physical world. The next wireless revolution will be the mobile wireless-reality revolution, which will bring the physical world to our fingertips. RF, mmWave and terahertz communication, imaging and sensing devices will enable us to interrogate the physical world and create virtual or augmented worlds in ways that exceed and augment human sensory situational awareness. The wireless-reality revolution will require a quantum leap forward in our ability to control and manipulate the RF-to-THz electromagnetic spectrum, and in our ability to transmit, acquire, aggregate and process the associated data.

I will describe recent research on high-power and energy-efficient millimeter-wave power amplifiers, transmitters and large-scale phased arrays that have drawn interest for next-generation 5G cellular networks. I will also describe recent work on extreme-bandwidth (>20Gbps) communication links at millimeter-waves for applications such as virtual and augmented reality. I will also briefly cover other novel wireless communication paradigms, including massive MIMO and full-duplex wireless, that enable extremely-high spectral efficiencies and data rates at lower RF frequencies. I will also talk about ongoing efforts towards the realization of city-scale testbeds that deploy advanced wireless hardware supporting mmWave, massive MIMO and full-duplex operation, enabling higher-layer systems research for the first time at city scales. Finally, I will touch upon new ideas on how to converge communications, imaging and sensing, enabling city-scale communication infrastructure to be repurposed for city-scale imaging.

About the Speaker: Harish Krishnaswamy (S’03–M’09) received the B.Tech. degree in electrical engineering from IIT Madras, Chennai, India, in 2001, and the M.S. and Ph.D. degrees in electrical engineering from the University of Southern California (USC), Los Angeles, CA, USA, in 2003 and 2009, respectively. In 2009, he joined the Electrical Engineering Department, Columbia University, New York, NY, USA, where he is currently an Associate Professor and the Director of the Columbia High-Speed and Millimeter-Wave IC Laboratory (CoSMIC).

In 2017, he co-founded MixComm Inc., a venture-backed startup, to commercialize CoSMIC Laboratory’s advanced wireless research. His current research interests include integrated devices, circuits, and systems for a variety of RF, mmWave, and sub-mmWave applications.

Dr. Krishnaswamy was a recipient of the IEEE International Solid-State Circuits Conference Lewis Winner Award for Outstanding Paper in 2007, the Best Thesis in Experimental Research Award from the USC Viterbi School of Engineering in 2009, the Defense Advanced Research Projects Agency Young Faculty Award in 2011, the 2014 IBM Faculty Award, the Best Demo Award at the 2017 IEEE ISSCC, and Best Student Paper Awards (First Place) at the 2015 and 2018 IEEE Radio Frequency Integrated Circuits Symposium. He has been a member of the technical program committee of several conferences, including the IEEE International Solid-State Circuits Conference since 2015 and the IEEE Radio Frequency Integrated Circuits Symposium since 2013. He currently serves as a Distinguished Lecturer for the IEEE Solid-State Circuits Society and as a member of the DARPA Microelectronics Exploratory Council.

Jack Keil Wolf Lecture Series: Guessing Random Additive Noise Decoding (GRAND)

Speaker: Muriel Medard, MIT

Time: 11:00 am - 12:00 pm Nov 8, 2018

Location: 370 Jay Room 1201, Brooklyn, NY

Abstract: We introduce a new algorithm for Maximum Likelihood (ML) decoding based on guessing noise. The algorithm is based on the principle that the receiver rank orders noise sequences from most likely to least likely. Subtracting noise from the received signal in that order, the first instance that results in an element of the code-book is the ML decoding. For common additive noise channels, we establish that the algorithm is capacity achieving for uniformly selected code-books, providing an intuitive alternate approach to the channel coding theorem. When the code-book rate is less than capacity, we identify exact asymptotic error exponents as the block-length becomes large. We illustrate the practical usefulness of our approach in terms of speeding up decoding for existing codes.

Joint work with Ken Duffy, Kishori Konwar, Jiange Li, Prakash Narayana Moorthy, Amit Solomon.
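The guessing procedure described in the abstract can be illustrated with a minimal sketch. It is not the speaker's implementation: it assumes a binary symmetric channel with flip probability below 1/2 (so noise sequences with fewer flips are more likely), and it uses the (7,4) Hamming code purely as a small example code-book. The names `H`, `is_codeword`, `noise_patterns` and `grand_decode` are hypothetical.

```python
from itertools import combinations

# Parity-check matrix of the (7,4) Hamming code (an assumed example code-book).
H = [
    [1, 0, 1, 0, 1, 0, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

def is_codeword(word):
    """A word is in the code-book iff every parity check is 0 mod 2."""
    return all(sum(h * w for h, w in zip(row, word)) % 2 == 0 for row in H)

def noise_patterns(n):
    """Yield binary noise sequences from most to least likely.

    Assumption: binary symmetric channel with flip probability < 1/2,
    so likelihood decreases with the number of flipped bits.
    """
    for weight in range(n + 1):
        for flips in combinations(range(n), weight):
            pattern = [0] * n
            for i in flips:
                pattern[i] = 1
            yield pattern

def grand_decode(received):
    """Remove guessed noise in likelihood order; stop at the first codeword."""
    for guesses, noise in enumerate(noise_patterns(len(received)), start=1):
        candidate = [r ^ e for r, e in zip(received, noise)]  # subtract noise mod 2
        if is_codeword(candidate):
            return candidate, noise, guesses
    raise ValueError("no codeword found")

sent = [1, 1, 1, 0, 0, 0, 0]        # a valid codeword of the example code
received = [1, 1, 1, 0, 1, 0, 0]    # the same word with one bit flipped
decoded, noise, guesses = grand_decode(received)
print(decoded, noise, guesses)
```

The decoder only needs a membership test for the code-book, which is why the same loop can in principle be reused with other codes; the brute-force enumeration here is for illustration and would not scale to long block lengths.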

About the Speaker: Muriel Medard is the Cecil H. Green Professor in the Electrical Engineering and Computer Science (EECS) Department at MIT and leads the Network Coding and Reliable Communications Group at the Research Laboratory for Electronics at MIT. She has co-founded three companies to commercialize network coding, CodeOn, Steinwurf and Chocolate Cloud. She has served as editor for many publications of the Institute of Electrical and Electronics Engineers (IEEE), of which she was elected Fellow, and she has served as Editor in Chief of the IEEE Journal on Selected Areas in Communications. She was President of the IEEE Information Theory Society in 2012, and served on its board of governors for eleven years. She has served as technical program committee co-chair of many of the major conferences in information theory, communications and networking. She received the 2009 IEEE Communication Society and Information Theory Society Joint Paper Award, the 2009 William R. Bennett Prize in the Field of Communications Networking, the 2002 IEEE Leon K. Kirchmayer Prize Paper Award, the 2018 ACM SIGCOMM Test of Time Paper Award and several conference paper awards. She was co-winner of the MIT 2004 Harold E. Edgerton Faculty Achievement Award, received the 2013 EECS Graduate Student Association Mentor Award and served as Housemaster for seven years. In 2007 she was named a Gilbreth Lecturer by the U.S. National Academy of Engineering. She received the 2016 IEEE Vehicular Technology James Evans Avant Garde Award, the 2017 Aaron Wyner Distinguished Service Award from the IEEE Information Theory Society and the 2017 IEEE Communications Society Edwin Howard Armstrong Achievement Award.

Information and Uncertainty in Learning

Speaker: Meir Feder, Tel-Aviv University, Israel

Time: 11:00 am - 12:00 pm, Nov 9, 2018

Location: 2MTC Room 10.099, Brooklyn, NY

Abstract: An information-theoretic approach to learning is presented, following the universal prediction/universal coding paradigms developed in the 90's. This approach leads to learning schemes that are more stable and have precise optimality criteria, as compared to the standard approach based on empirical risk minimization (ERM) and stochastic gradient descent (SGD). The comparison and advantages are shown on a variety of learning models from basic linear regression to deep neural networks (DNN), demonstrating the utility of proper information extraction from the training data for better learning. One of the main strengths of this approach is a measure of learnability or uncertainty in the predictive power of learning. The approach provides an information-theoretic learnability measure that depends on the specific training examples and the specific test features. We demonstrate how this measure lets the learner know when it does not know. The main results presented are for supervised learning. Towards the end of the talk we will also discuss unsupervised learning, active learning, model class selection and the ability to use large model classes, or unions of model classes, in a hierarchical universality fashion.

About the Speaker: Meir Feder is a Chaired Professor (Information Theory Chair) at the School of Electrical Engineering, Tel-Aviv University. He holds an Sc.D. degree from the Massachusetts Institute of Technology (MIT), was a visiting professor at MIT, and has held visiting positions at Bell Laboratories and the Scripps Institution of Oceanography. He is an IEEE Fellow and has received several academic awards, including the IEEE Information Theory Society Best Paper Award.

During his academic career, Prof. Feder was closely involved in the high-tech industry with numerous companies, including working with Intel on the MMX architecture and designing efficient multimedia algorithms for it. In 1998 he co-founded Peach Networks, a provider of server-based interactive TV system via the cable network, acquired in 2000 by Microsoft. He then co-founded Bandwiz, a provider of massive content delivery systems for enterprise networks. In 2004 he co-founded Amimon, a leading provider of low latency, perfect quality wireless high-definition A/V connectivity for consumer and professional market. This year he co-founded Run.ai, to enable efficient, highly distributed, deep learning solutions in the cloud.

Circuits: Terahertz (THz) and Beyond Seminar Series: Channel measurements above 100 GHz

Speaker: Daniel M. Mittleman, Brown University

Time: Nov 15, 2018

Location: 370 Jay Room 1201 Auditorium, Brooklyn, NY

Abstract: To accommodate the rapid increase in global wireless traffic, which will reach 49 exabytes per month by 2021, wireless networks operating beyond 95 GHz will be required. The operation and characteristics of such networks are quite different from those of conventional wireless systems, or even of 5G systems which will employ millimeter-wave links at lower frequencies. The distinctions arise from the much shorter wavelength, which implies both a significantly higher directionality and very different propagation and diffraction characteristics. This offers both challenges and opportunities for future networks operating at these frequencies. In this presentation, we discuss several new measurements to characterize aspects of these high-frequency channels, including non-line-of-sight links. A first study of the implications of high directionality on eavesdropping and physical-layer security will also be described.
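As a rough illustration of why a shorter wavelength implies higher directionality, the sketch below computes the free-space wavelength λ = c/f and the gain of an ideal, lossless aperture of fixed area, G = 4πA/λ². Both formulas are standard antenna theory rather than results from the talk, and the carrier frequencies and the 1 cm² aperture are assumed values chosen only for illustration.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s

def wavelength_m(freq_hz):
    """Free-space wavelength in meters."""
    return C / freq_hz

def aperture_gain_db(freq_hz, area_m2):
    """Gain in dBi of an ideal, lossless aperture: G = 4*pi*A / lambda^2."""
    lam = wavelength_m(freq_hz)
    return 10 * math.log10(4 * math.pi * area_m2 / lam ** 2)

AREA_M2 = 1e-4  # assumed 1 cm^2 aperture, for illustration only

for f_ghz in (28, 95, 300):  # illustrative carrier frequencies
    f_hz = f_ghz * 1e9
    print(f"{f_ghz:>4} GHz: wavelength {wavelength_m(f_hz) * 1e3:6.2f} mm, "
          f"ideal gain {aperture_gain_db(f_hz, AREA_M2):5.1f} dBi")
```

For a fixed physical aperture, the ideal gain grows by 20 dB for every tenfold increase in frequency, which is one way to see why links above 95 GHz tend to be much more directional than links in lower bands.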

About the Speaker: Dr. Mittleman received his B.S. in physics from the Massachusetts Institute of Technology in 1988, and his M.S. in 1990 and Ph.D. in 1994, both in physics from the University of California, Berkeley, under the direction of Dr. Charles Shank. He then joined AT&T Bell Laboratories as a post-doctoral member of the technical staff, working first for Dr. Richard Freeman on a terawatt laser system, and then for Dr. Martin Nuss on terahertz spectroscopy and imaging. Dr. Mittleman joined the ECE Department at Rice University in September 1996. In 2015, he moved to the School of Engineering at Brown University. His research interests involve the science and technology of terahertz radiation. He is a Fellow of the OSA, the APS, and the IEEE, and is a 2018 recipient of the Humboldt Research Award. He is currently serving a three-year term as Chair of the International Society for Infrared Millimeter and Terahertz Waves.

Learning to Control Complex Dynamical Systems

Speaker: Vikas Sindhwani, Google Brain, NYC

Time: 11:00am - 12:00pm Nov 27, 2018

Location: 370 Jay, Room 1201, Brooklyn, NY

Abstract: With rapid advances in machine learning and perception, it is no longer far-fetched to imagine a world -- in the not too distant future -- with self-driving cars on roads, autonomous drones in the sky and perhaps even mobile manipulators in our kitchens. In this talk, I will share a few vignettes of Robotics research at Google at the intersection of machine learning and control. Topics include non-smooth trajectory optimization, geometric reasoning in 3D environments, inferring stable vector fields for imitation learning and model-based control, and data-efficient "blackbox" policy optimization. His publications are available at: vikas.sindhwani.org.

About the Speaker: Vikas Sindhwani is a Research Scientist in the Google Brain team in New York, where he leads a research group focused on solving a range of perception, learning and control problems arising in Robotics. His interests are broadly in the core mathematical foundations of statistical learning, and in end-to-end design aspects of building large-scale, robust machine intelligence systems. He received the best paper award at Uncertainty in Artificial Intelligence (UAI) 2013, the IBM Pat Goldberg Memorial Award in 2014, and was co-winner of the Knowledge Discovery and Data Mining (KDD) Cup in 2009. He serves on the editorial board of IEEE Transactions on Pattern Analysis and Machine Intelligence, and has been area chair and senior program committee member for the International Conference on Learning Representations (ICLR) and Knowledge Discovery and Data Mining (KDD). He previously led a team of researchers in the Machine Learning group at IBM Research, NY. He has a PhD in Computer Science from the University of Chicago and a B.Tech in Engineering Physics from Indian Institute of Technology (IIT) Mumbai.

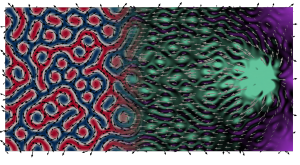

Reservoir Computing with Random Skyrmion Fabrics

Speaker: Daniele Pinna, Johannes Gutenberg University, Germany

Time: 3:00pm - 4:00pm Nov 27, 2018

Location: 2MTC 10.099, Brooklyn, NY

Abstract: The topologically protected magnetic spin configurations known as skyrmions offer promising applications due to their stability, mobility and localization. Thanks to their many nanoscale properties, skyrmions have been shown to be promising in applications ranging from non-volatile memory and spintronic logic devices to the implementation of unconventional computational paradigms such as Stochastic Computing and Reservoir Computing. In particular, Reservoir Computing is a type of recurrent neural network commonly used for recognizing and predicting spatio-temporal events. Its basic functioning does not require any knowledge of the reservoir topology or node weights for training purposes and can therefore utilize naturally existing networks formed by a wide variety of physical processes.

In this talk we will discuss how a random skyrmion "fabric" composed of skyrmion clusters embedded in a magnetic substrate can be effectively employed to implement a functional reservoir (see Figure). This is achieved by leveraging the nonlinear resistive response of the individual skyrmions arising from their current-dependent anisotropic magneto-resistance effect (AMR). Complex time-varying current signals injected via contacts into the magnetic substrate are shown to be modulated nonlinearly by the fabric's AMR due to the current distribution following paths of least resistance as it traverses the geometry. By tracking resistances across multiple input and output contacts, we show how the instantaneous current distribution effectively carries temporally correlated information about the injected signal. This in turn allows us to numerically demonstrate simple pattern recognition. We argue that the fundamental ingredients for such a device to work are threefold: i) concurrent probing of the magnetic state; ii) a stable ground state when forcings are removed; iii) a nonlinear response to input forcing. Whereas we demonstrate this by employing skyrmion fabrics, the basic ingredients should be general enough to spur the interest of the greater magnetism and magnetic materials community to explore novel reservoir computing systems.

About the Speaker: Thermal noise is typically regarded as a nuisance when designing new device technologies, all the more so in nanomagnetic applications, where it can lead to degraded device performance through device volatility and memory loss. In precise circumstances, however, the random variability guaranteed by thermal effects can be harnessed to enable useful device behavior at high energy efficiency and low power consumption. Thermal assistance has been known to guide phenomena such as Brownian ratchets and stochastic resonance. From a dynamical systems perspective, this takes place when the physical system being studied either lacks detailed balance or is tuned periodically on timescales comparable with thermal fluctuation frequencies. My research focuses both on modelling the thermal phenomena affecting spintronically driven nanomagnet dynamics and on assessing how they can be controlled in the design of magnetic structures to implement novel computational tasks. As an example, the scalability and easy tunability of magnetic heterostructures make them ideal candidates for constructing dense ensembles of magnetically and electrically coupled devices. Similarly, stable magnetic solitons living on extended thin films (such as skyrmions) can serve as extremely compact nonlinear elements. Both of these properties are fundamental requirements for future analog devices capable of enacting the operating principles of neural networks and reservoir computers. My work uses both numerical micromagnetic techniques and theoretical insight to grapple with the underlying principles of the phenomena I study and to implement valid proofs of concept for real-world applications.

Circuits: Terahertz (THz) and Beyond Seminar Series: Out of Many, Many: The Path towards Scalable, Integrated, mm-Wave MIMO Arrays

Speaker: Arun Natarajan, Oregon State University

Time: 11:00 am - 12:00 pm Dec 6, 2018

Location: 370 Jay Street, Room 1201 Auditorium, Brooklyn, NY

Abstract: Wireless data usage is doubling every year and network providers are projecting demand approaching 1Gb/user/day, necessitating a 1000x increase in network capacity and 100x increase in worst-case data rates with perhaps only 2x increase in available spectrum at frequencies below 3GHz. Future cellular wireless networks will evolve to higher center frequencies that provide larger available spectrum and to ultra-dense multi-user MIMO (MU-MIMO) with heterogeneous cell size (macro, pico, femto) for increased spatial spectrum reuse. Therefore, scalable RF and mm-wave transceivers with 10 (user terminal) to 1000 (base station) elements operating in ad hoc frequency-reuse scenarios are the future paradigm for wireless transceivers. Over the last 15 years, the feasibility of integrating multiple transceivers in silicon to achieve multiple-input single-output phased-array operation has been extensively demonstrated. However, MIMO transceivers that preserve all spatial degrees of freedom promise much higher data rates and support for digital multi-beam forming. Building such scalable digital-intensive MIMO radios will require energy-efficient scalable RF/mm-wave interfaces, interferer tolerance and scalable array synchronization. In this talk, I will explore these challenges and present our research on scalable efficient mm-wave antenna-IC interfaces, reconfigurable MIMO spectral/spatial/code-domain filtering and array-level clock synchronization, focusing on architectures/circuits that potentially improve upon simple scaling of single-element transceivers for MIMO arrays.

About the Speaker: Dr. Natarajan’s research is focused on mm-wave and sub-mm-wave integrated circuits and systems for high-speed wireless communication and imaging. He received the B.Tech. degree in electrical engineering from the Indian Institute of Technology, Madras, in 2001 and the M.S. and Ph.D. degrees in electrical engineering from the California Institute of Technology (Caltech), Pasadena, in 2003 and 2007, respectively. From 2007 to 2012, he was a Research Staff Member at IBM T. J. Watson Research Center, NY, and worked on mm-wave phased arrays for multi-Gb/s data links and airborne radar and on self-healing circuits for increased yield in sub-micron process technologies. Dr. Natarajan received the National Talent Search Scholarship from the Government of India (1995-2000), the Caltech Atwood Fellowship in 2001, the Analog Devices Outstanding Student IC Designer Award in 2004, and the IBM Research Fellowship in 2005, and serves on the Technical Program Committee of the IEEE Radio-Frequency Integrated Circuits (RFIC) Conference.

Modern Artificial Intelligence Seminar Series: The Path to the Nobel Prize

Speaker: Richard J. Roberts, New England Biolabs, Inc.

Time: 11:00 am - 12:00 pm Dec 11, 2018

Location: 370 Jay Street, Room 1201 Auditorium, Brooklyn, NY

Abstract: In this talk I will briefly describe how I became interested in science and how I almost became a professional billiards player. Following my early interests in chemistry and my pursuit of a Ph.D. in chemistry, I became fascinated with biology and read a book, “The Thread of Life” by John Kendrew, that led to my becoming a molecular biologist. I will describe the research that led to the discovery of RNA splicing, which turned out to be a temporary diversion from my real interests in DNA restriction and modification (RM). With a keen interest in sequencing DNA, I became heavily involved in using computers and was a pioneer in what is now called bioinformatics. In the RM field many discoveries have been made including, most recently, some exciting findings on bacterial methylomes.

My career has spanned traditional academic research to more commercially inspired ventures. Since I now work at New England Biolabs, a for-profit company, I will describe its origins, its philosophy towards business and life, and how commercial success can fund innovative research. One theme running through my career has been a lack of respect for dogma and a keen sense of questioning everything that people tell me they already know.

About the Speaker: Dr. Richard J. Roberts is the Chief Scientific Officer at New England Biolabs, Beverly, Massachusetts. He received a Ph.D. in Organic Chemistry in 1968 from Sheffield University and then moved as a postdoctoral fellow to Harvard. From 1972 to 1992, he worked at Cold Spring Harbor Laboratory, eventually becoming Assistant Director for Research under Dr. J.D. Watson. He began work on the newly discovered Type II restriction enzymes in 1972 and these enzymes have been a major research theme. Studies of transcription in Adenovirus-2 led to the discovery of split genes and mRNA splicing in 1977, for which he received the Nobel Prize in Physiology or Medicine in 1993. During the sequencing of the Adenovirus-2 genome, computational tools became essential and his laboratory pioneered the application of computers in this area. DNA methyltransferases, as components of restriction-modification systems, are also of active interest, and the first crystal structures for the HhaI methyltransferase led to the discovery of base flipping. Bioinformatic studies of microbial genomes to find new restriction systems are a major research focus, as is the elucidation of DNA methyltransferase recognition sequences using SMRT sequencing and a new approach to m5C-containing recognition sequences.

ECE Special Seminar Series: Research Challenges in High Performance Integrated Systems

Speaker: Eby G. Friedman, University of Rochester

Time: 11:00 am - 12:00 pm Dec 13, 2018

Location: 5MTC, LC400, Brooklyn, NY

Abstract: The intention of this presentation is to provide an overview of the different areas of active research focus in the high performance integrated circuit design laboratory at the University of Rochester. Each of these topics considers different aspects of the systems integration process, with a focus on physical and circuit level aspects. Emphasis is placed on the fundamental challenges in delivering performance to high speed, high complexity heterogeneous integrated systems. Technologies range from deeply scaled CMOS, to emerging devices and circuits such as spintronic, photonic, and superconductive technologies, to systems platforms such as three-dimensional integration.

Delivering high quality power to on-chip circuitry with minimum energy loss is a fundamental objective of all modern integrated circuits. Power conversion and regulation resources need to be efficiently managed to supply high quality power with minimum energy loss within multiple on-chip power domains. An integrated system of hundreds of power regulators with many millions of decoupling capacitors is required, distributing the power locally to many billions of on-chip loads. Circuits, algorithms, and design methodologies are being developed to fundamentally change the manner in which power is delivered through the package and on-chip.

Three-dimensional (3-D) integration is changing the path for device scaling, supporting the delivery of multi-faceted heterogeneous systems. Techniques and methodologies are under development to better design, model, architect, and build 3-D systems. Several test circuits have also been developed to evaluate some of the key issues in 3-D systems integration such as synchronization, power delivery, and thermal effects.

Spintronic circuits have the potential to enhance CMOS in several dimensions, particularly as non-volatile memory and novel non-von Neumann structures. Several models and circuit topologies will be described and placed within a CMOS perspective.

The energy expended in server farms has become an issue of seminal significance. CMOS simply expends too much energy to scale the size and number of these farms to satisfy expected needs. An ultra-low energy technology is needed. One possible technology is superconductive single flux quantum circuits. This technology will be briefly reviewed, and novel design methodologies, algorithms, and circuit structures will be described to support the development of large scale Josephson junction based integrated systems.

About the Speaker: Eby G. Friedman received the B.S. degree from Lafayette College in 1979, and the M.S. and Ph.D. degrees from the University of California, Irvine, in 1981 and 1989, respectively, all in electrical engineering.

From 1979 to 1991, he was with Hughes Aircraft Company, rising to the position of manager of the Signal Processing Design and Test Department, responsible for the design and test of high performance digital and analog integrated circuits. He has been with the Department of Electrical and Computer Engineering at the University of Rochester since 1991, where he is a Distinguished Professor, and the Director of the High Performance VLSI/IC Design and Analysis Laboratory. He is also a Visiting Professor at the Technion - Israel Institute of Technology. His current research and teaching interests are in high performance synchronous digital and mixed-signal microelectronic design and analysis with application to high speed portable processors, low power wireless communications, and power efficient server farms.

He is the author of more than 500 papers and book chapters, 16 patents, and the author or editor of 18 books in the fields of high speed and low power CMOS design techniques, 3-D design methodologies, high speed interconnect, and the theory and application of synchronous clock and power distribution networks. Dr. Friedman is the Editor-in-Chief of the Microelectronics Journal, a Member of the editorial boards of the Journal of Low Power Electronics and Journal of Low Power Electronics and Applications, and a Member of the technical program committee of numerous conferences. He previously was the Editor-in-Chief and Chair of the Steering Committee of the IEEE Transactions on Very Large Scale Integration (VLSI) Systems, the Regional Editor of the Journal of Circuits, Systems and Computers, a Member of the editorial board of the Proceedings of the IEEE, IEEE Transactions on Circuits and Systems II: Analog and Digital Signal Processing, IEEE Journal on Emerging and Selected Topics in Circuits and Systems, Analog Integrated Circuits and Signal Processing, and Journal of Signal Processing Systems, a Member of the Circuits and Systems (CAS) Society Board of Governors, Program and Technical chair of several IEEE conferences, and a recipient of the IEEE Circuits and Systems Charles A. Desoer Technical Achievement Award and Mac Van Valkenburg Award, a University of Rochester Graduate Teaching Award, and a College of Engineering Teaching Excellence Award. Dr. Friedman is an Honorary Chair Professor at National Sun Yat-sen University, a Senior Fulbright Fellow, and an IEEE Fellow.

Lyapunov approach to the stability of feedback interconnections of linear and nonlinear negative imaginary systems

Speaker: Ian R. Petersen, Australian National University, Australia

Time: 1:00 pm - 2:00 pm Dec 13, 2018

Location: 6MTC, RH304, Brooklyn, NY

Abstract: Negative imaginary systems theory allows for the design of robust controllers for flexible structures with force actuators and position sensors. The main stability result in this theory shows that the positive feedback interconnection of a negative imaginary system and a strictly negative imaginary system will be stable provided a certain DC gain condition is satisfied. We present a new Lyapunov proof of this result and then use this to extend the result into the realm of nonlinear systems, introducing nonlinear versions of the negative imaginary and strictly negative imaginary properties.

About the Speaker: Ian R. Petersen was born in Victoria, Australia. He received a Ph.D. in Electrical Engineering in 1984 from the University of Rochester. From 1983 to 1985 he was a Postdoctoral Fellow at the Australian National University. From 1985 until 2016 he was with UNSW Canberra, where he was most recently a Scientia Professor and an Australian Research Council Laureate Fellow in the School of Engineering and Information Technology. He has previously been ARC Executive Director for Mathematics, Information and Communications, Acting Deputy Vice-Chancellor Research for UNSW and an Australian Federation Fellow. Since 2017 he has been a Professor at the Australian National University. He is currently the Director of the Research School of Engineering at the Australian National University. He has served as an Associate Editor for the IEEE Transactions on Automatic Control, Systems and Control Letters, Automatica, IEEE Transactions on Control Systems Technology and SIAM Journal on Control and Optimization. Currently he is an Editor for Automatica. He is a Fellow of IFAC, the IEEE and the Australian Academy of Science. His main research interests are in robust control theory, quantum control theory and stochastic control theory.