NYU Tandon research to advance environmental sound classification wins IEEE Best Paper Award

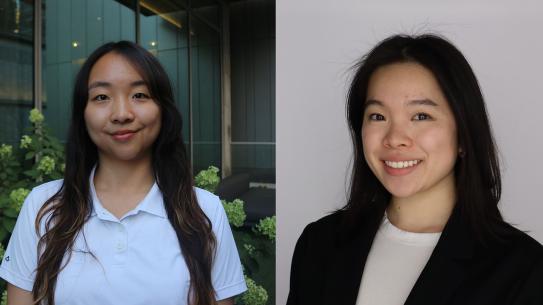

Justin Salamon, former senior research scientist at NYU Tandon's Center for Urban Science and Progress (CUSP) and the Music and Audio Research Lab (MARL) at NYU Steinhardt, co-wrote the award-winning paper.

BROOKLYN, New York, January 13, 2021 – The paper “Deep Convolutional Neural Networks and Data Augmentation for Environmental Sound Classification” has won the 2020 Institute of Electrical and Electronics Engineers (IEEE) Signal Processing Society (SPS) Signal Processing Letters Best Paper Award. The article, which appeared in the March 2017 issue of the journal, was written by Justin Salamon and Juan Pablo Bello. At the time of publication, Salamon was a senior research scientist at the NYU Tandon School of Engineering’s Center for Urban Science and Progress (CUSP) and the Music and Audio Research Lab (MARL) at NYU Steinhardt; Bello is the director of both CUSP and MARL. The paper was honored for its “exceptional merit and broad interest on a subject related to the Society's technical scope.” (To be eligible for consideration, an article must have appeared in Signal Processing Letters within a five-year window.)

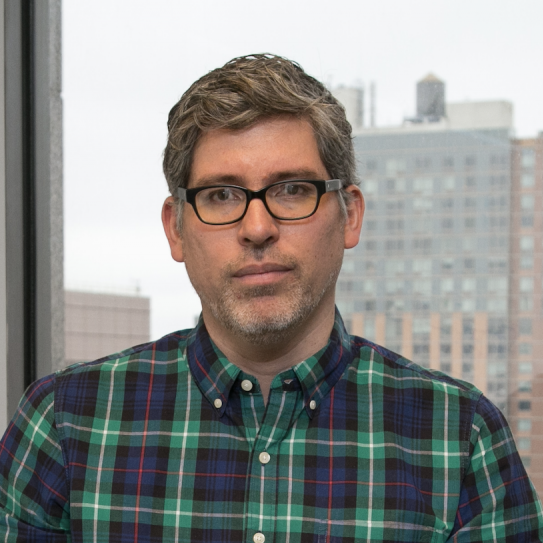

In 2015, Bello (who is also a Professor of Computer Science & Engineering and Electrical & Computer Engineering at NYU Tandon, as well as a Steinhardt Professor of Music Technology) launched SONYC (Sounds of New York City), a project aimed at developing new technologies for the monitoring, analysis, and mitigation of urban noise pollution. The project’s convergence research agenda spans fields such as sensor networks, acoustics, citizen and data science, and machine learning.

As described in their award-winning paper, Bello and Salamon proposed employing deep convolutional neural networks (CNNs) – a class of neural networks originally developed for computer vision tasks – to learn discriminative spectro-temporal patterns in environmental sounds. They were among the first researchers to explore this application, and they found that although the high-capacity models were well suited to the task, the relative scarcity of labeled data held the models back.

They solved that issue by augmenting the data they did have. Salamon, now a research scientist and member of the Audio Research Group at Adobe, explained, “Visual data is often augmented. If you’re training a machine learning model to recognize dogs, a single picture of a dog can be flipped, rotated, or cropped, but it’s still a picture of a dog. In the same way, the sound of a siren, for example, can be made softer or louder, compressed, or varied in pitch, but it’s still a siren.”

Augmenting their data greatly increased the size of their dataset, allowing them to fully harness the power of their proposed CNN and achieve state-of-the-art classification performance.
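To make the augmentation idea concrete, the short Python sketch below shows how one labeled clip can be turned into several training examples that all keep the same label. It is an illustration only and not the authors' implementation: the function names, the synthetic stand-in "siren" tone, and every parameter value (gain in decibels, compression threshold and ratio, pitch shift in semitones) are assumptions chosen for readability. The pitch shift here is a naive resampling that also changes the clip's length; a real pipeline would more likely rely on a dedicated audio library.

```python
import numpy as np


def change_gain(audio, gain_db):
    """Make the clip louder (positive dB) or softer (negative dB)."""
    return audio * 10.0 ** (gain_db / 20.0)


def compress(audio, threshold=0.5, ratio=4.0):
    """Crude dynamic range compression: scale down whatever exceeds the threshold."""
    magnitude = np.abs(audio)
    excess = np.maximum(magnitude - threshold, 0.0)
    return np.sign(audio) * (np.minimum(magnitude, threshold) + excess / ratio)


def shift_pitch(audio, semitones):
    """Naive pitch shift by resampling; this also shortens or lengthens the clip."""
    rate = 2.0 ** (semitones / 12.0)
    new_length = int(round(len(audio) / rate))
    positions = np.arange(new_length) * rate
    return np.interp(positions, np.arange(len(audio)), audio)


def augment(audio, label):
    """Return the original clip plus altered copies, all carrying the same label."""
    variants = [
        change_gain(audio, -6.0),   # softer
        change_gain(audio, 6.0),    # louder
        compress(audio),            # compressed
        shift_pitch(audio, 2.0),    # higher pitch
        shift_pitch(audio, -2.0),   # lower pitch
    ]
    return [(audio, label)] + [(variant, label) for variant in variants]


# Hypothetical one-second stand-in for a siren: a tone whose pitch wobbles slowly.
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
siren = 0.8 * np.sin(2 * np.pi * 700 * t + 5 * np.sin(2 * np.pi * 2 * t))

examples = augment(siren, "siren")
print(len(examples), "training examples from one recording")
```

Each altered copy still sounds like a siren, so the label carries over unchanged, which is what lets a small labeled collection stand in for a much larger one.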

That multipronged approach has since influenced sound classification work in a variety of research areas, from tracking migration patterns in birds to measuring the effectiveness of home smoke alarms. “Researchers are even working on flagging possible COVID-19 cases by analyzing coughing sounds,” Salamon said.

The best-paper award will be presented at the IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), which is scheduled to be held in Toronto on June 6-11, 2021.