NYU Tandon Explores Our Future Reality

Students Showcase Their Augmented and Virtual Reality Projects at NYC Media Lab Event

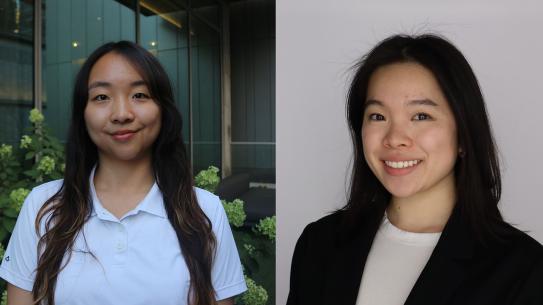

Integrated Digital Media grad students Vhalerie Lee and Subigya Basnet showcased their Game of Thrones-inspired AR narrative experience.

The NYC Media Lab’s third annual Exploring Future Reality event, held recently at NYU’s Kimmel Center, was jam-packed with discussions from leading executives and faculty researchers, innovative demos, hands-on workshops, and startup pitches. The Media Lab is a wide-ranging public-private initiative that counts as members the city’s leading media, technology and telecom companies, as well as faculty and students from several universities, including NYU.

The day began with a discussion among thought leaders on the current state of the burgeoning augmented and virtual reality (AR/VR) field, with Tandon represented on the panel by Professor Yong Liu, who was named earlier this year as a fellow of the Institute of Electrical and Electronics Engineers (IEEE). Liu, also a faculty member at the Center for Advanced Technology in Telecommunications (CATT) and NYU WIRELESS, detailed the network-level bandwidth challenges that must be met before AR/VR experiences can be widely accessible.

As the rest of the event showed, once those technical hurdles are overcome, users can expect a world of possibility to open up.

During the next portion of the day, Tandon students and faculty demonstrated a number of projects that showcased AR/VR’s potential to impact the worlds of entertainment, medicine, politics, office work, and more, including:

- A Game of Thrones-inspired AR narrative experience was created by Integrated Digital Media grad students Subigya Basnet and Vhalerie Lee. Their mobile app brings to life the popular stories and settings of Westeros: users simply hold up their phone’s camera to a physical map to navigate 3D castles. The approach could one day be used to re-imagine other television shows, movies, or texts, making them more compelling and immersive.

- Assistant Professor of Civil and Urban Engineering Semiha Ergan and her students are exploring the interrelations between neuroscience and the built environment by using body sensors to quantify the impact of various architectural features on human performance. Since 90% of time in a typical workday is spent indoors, and architectural features unquestionably impact the productivity, health and comfort of a building’s occupants, their work is of great value to architects, human-resources professionals, and others.

- Relevant Motion combines VR and motion-capture technology to create a cost-effective, user-friendly method of providing physical therapy for stroke rehabilitation and other functions. The project’s large, multidisciplinary team includes NYU instructors Todd Bryant and Kat Sullivan, Tandon students Spencer Cappiello and Najma Dawood-McCarthy, ITP alum Aaron Montoya-Moraga, and NYU Ability Project manager Claire Kearney-Volpe. Using inexpensive and easily obtained hardware along with a state-of-the-art VR platform, the Relevant Motion system “gamifies” the prescribed exercises and allows medical practitioners to evaluate and track client progress easily.

- IDM grad student Mateo Hernandez Almeyda has produced a VR film that puts users in the midst of a violent street protest in Venezuela, watching helplessly as police lob grenades into the crowd. While fully aware that they are engaged in an onscreen VR experience, many users flinch in real life, so unsettling are the sounds of gunshots and sense of panic in the crowd. Those who have used VR platforms before sometimes try to escape the grenades and chaos by directing their avatar to run, Hernandez Almeyda explains, but at the end of The Reality of Reality, as his project is titled, each user dies in an explosion of red that ultimately fades to black, an expression of the hopelessness of living under an oppressive regime.

Later in the day, attendees could move over to the Leslie eLab, where developers pitched their projects to a panel of entrepreneurial judges. Among the pitches was one for 2 Seconds to Safety, created by Tandon lecturer Mark Skwarek and alum Yao Chen. They demonstrated their mobile point-to-point navigation app, which merges AR with a smartphone camera to guide users through various locales, a capability that is particularly crucial when such deadly events as a fire or mass shooting are taking place. The system’s integrated sensors first detect the location of the threat; the space is 3D-mapped for location tracking; and users are guided by AR visual arrows to the safest location, where they can also signal to first responders.

For those inspired to create AR/VR projects of their own, the day also included a series of workshops, including one led by Tandon graduate candidate Lillian Warner, who is active in the NYU chapter of Design for America (DFA). Warner focused on creating user flows for mixed reality feedback, a challenging task because there are many user input options and features in any mixed reality experience, and designers must consider time, physical space, end goal, and other complex factors.

Tandon student Cindy Li took advantage of Warner’s workshop and spent time during the day taking in the demos and networking with other attendees. “There is so much going on in AR/VR right now,” she says. “And much of it is happening right at Tandon.”