Hiring and AI: let job candidates know why they were rejected

Artificial-intelligence tools are seeing ever broader use in hiring. But this practice is also hotly criticized because we rarely understand how these tools select candidates, and whether the candidates they select are, in fact, better qualified than those who are rejected.

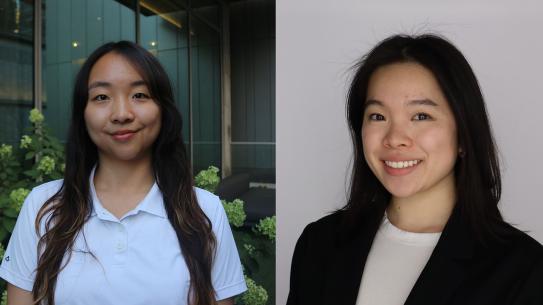

Julia Stoyanovich (CSE), a member of NYU’s Center for Data Science, director of NYU’s Center for Responsible AI, and author of this article, proposes a two-tiered label system. Stoyanovich explains that this system would allow “job candidates to see a list of the hiring criteria… so that they know precisely what a company is looking for. If the applicant is rejected, the AI will present them with another list, showing where they didn’t meet the criteria or compared unfavorably to other applicants,” in effect laying out the reasoning behind the decision. The result would be more transparency between employers and candidates.

Stoyanovich points out that AI often makes opaque judgment calls, and that employers frequently don’t know what data AI screeners use or how they analyze that data to reach a final decision. “The labels can show managers the factors that the AI is using to screen applicants,” she says, “and let those managers decide if those factors need to be changed.”

She argues that employers can use AI effectively for parts of the hiring process, namely to identify clear requirements-based matches. “But AI tools cannot exercise discretion or apply subjective judgment. My hope is that nutritional labels will help us come to a consensus on which decisions we should leave to an AI, and which we should make ourselves.”