AI & Local News newsletter, issue 8

Hello, and welcome to the eighth issue of the AI & Local News newsletter!

Join us for the AI & Local News Challenge Demo Day on May 19 at 12:30pm ET! Explore the five projects of the AI & Local News Challenge teams and learn about their applications in artificial intelligence, automation, story pipelines, curation, social media and misinformation. Our Challenge teams will be joined by a panel of local news experts for feedback. Learn more below and RSVP.

There’s still time to join The Associated Press’ AI Readiness Workshops. They’ve put together a great lineup of presenters and educational resources, so take a look.

Matt MacVey

Community & Project Lead, AI & Local News

NYC Media Lab

Matt@NYCMediaLab.org

PS If this email was forwarded to you, you can easily sign up here. Take a look at our newsletter archive to learn more.

Partner News

Associated Press: “AP has a free, online course to help newsrooms understand and implement new tools and technology that include automation and AI. The final session is on What’s Next for AI in Journalism and takes place on Wednesday, May 18 at 1 p.m. ET. Jim Kennedy will chat with Francesco Marconi, CEO of Applied XL, who was previously an instrumental part of AP's adoption of AI. Northwestern University professor Nick Diakopoulos will explain where AI is headed and the possibilities and responsibilities for journalism. The session will close with a breakout room where you will share project ideas or get help to find a project to pitch to your boss or to AP for its yearlong consultancy.

Live sessions are recorded and are part of a suite of tutorials, resources and discussions on tools and technology that could be used across the news value chain: newsgathering, production, distribution and the business side. Next week’s tutorials include CRMs, ad tech automations, subscriber and donor propensity models and chatbots.

The course is based on a recent report from almost 200 U.S. local newsroom “scorecards” that identified AI readiness. Also included in the report are profiles of five local news leaders and technology gaps they’d like to address in their newsroom.

Read the report and sign up for the course.”

Brown Institute’s Local News Lab: “We are just about finished with testing of our recommendation system on The Philadelphia Inquirer’s article pages and we’re looking forward to analyzing and reporting the results soon. We’ve been delighted to work with such engaged partners and have received some positive feedback that tells us we’re on the right track:

‘We want to thank you again for all the work you and your colleagues have put into this project and all the assistance you provided through the A/B tests. We’re very excited that Trib editors and reporters will no longer have to spend so much time manually picking story recommendations. Should be a huge productivity gain for our entire editorial operation.’ - Dan Simmons-Ritchie (Engineer, The Texas Tribune)

We are also excited to welcome Duy Nguyen to the team as our new machine learning engineer. We also bid a very sad but grateful farewell to our fearless project and engineering lead, Ting Zhang, who has been with the lab from the start. So much of what we’ve accomplished and how we work is due to their efforts - we wish Ting the best in their next chapter!”

NYC Media Lab: “In our AI & Local News Challenge, five dynamic teams built AI applications to support quality information for local news audiences and sustainable news outlets.

Demo Day (Thurs May 19, 12:30pm ET) is our virtual event where each team will present their work and receive feedback from a panel of local news innovation experts. Join us to explore artificial intelligence and automation for journalism, including applications for story pipelines, social media, curation and misinformation.

Our teams come from backgrounds in news organizations, startups and university research and development.

- Information Tracer: Information Tracer is a real-time, cross-platform information gathering system that contextualizes and quantifies information spread.

- Localizer: At Gannett, news automation is the fuel that helps achieve greater relevance by delivering hyperlocal information at scale to drive digital subscription growth. Localizer uses news automation techniques, such as Natural Language Generation, to parse data into local stories.

- Overtone: Overtone has built a Natural Language Processing (NLP) tool that finds and sorts online content by quality.

- SimPPL: SimPPL (read: sim-people) is a simulator of user activity on social networks. It tracks engagement with news stories, develops a model conditioned on individual attitudes toward news-sharing, and measures user behavior to guide content generation and digital distribution.

- Social Fabric for Publishers: Social Fabric for Publishers helps publishers bring the social conversation directly to consumers, avoiding social media sites like Twitter. The team’s technology makes it scalable to share topical conversations across a publisher’s article pages, giving media outlets new ways to drive engagement in addition to personalization features that tap into users’ social graphs.”

Partnership on AI: “On April 27, Claire Leibowicz, Head of AI and Media Integrity at PAI, joined the JournalismAI project at LSE for their Community Workshop, “How to Design Guidelines for the Responsible Use of AI in your News Organization,” alongside Uli Köppen, Head of the AI + Automation Lab at Bayerischer Rundfunk in Germany. Check out the recording of the conversation here, and keep your eyes out for a written guide related to these themes coming in the next few months.”

News

All you need to know about predictive journalism

Nicholas Diakopoulos delves into predictive journalism, a genre of data journalism used to generate evidence-based forecasts.

Diakopoulos interviewed some of the genre’s key players and analyzed the contents of dozens of published examples. The idea is that prediction, as a journalistic practice, can describe and synthesize a range of topics, including elections, COVID and sports.

Central to the genre is the use of computational modeling techniques such as machine learning and simulation. But the research also shows the ethical limits of predictive journalism and the importance of developing rigorous editing protocols for sourcing and publishing predictions.

AI Colonialism

MIT Technology Review launched a new series, “AI Colonialism,” to investigate how AI could exacerbate global inequities. Could AI mimic and then “update” patterns of colonial history by empowering the rich and exploiting the poor? This new world order would introduce novel and insidious means to increase inequality and human rights risks.

The series has four parts thus far, including:

- South Africa’s private surveillance machine fueling a digital apartheid

- How the AI industry is exploiting cheap labor in crisis-stricken Venezuela

- A new vision for the purpose of AI in New Zealand’s Maori community

Time for Europe to get serious on Artificial Intelligence

While the EU has openly stated it aims to become a global AI standard-setter, a new report from the European Parliament’s Special Committee on Artificial Intelligence in a Digital Age (AIDA) finds that Europe is falling woefully behind in its ambitions. While over 60 countries, including the US, have developed strategic AI plans, such a plan remains elusive for the EU, and it is no surprise that only eight of today’s top 200 digital companies are domiciled in the EU. The Committee urges the EU to develop a bloc-wide strategy as soon as possible to finally move into a leadership role.

Events

Collaborative Journalism Summit

Date: May 19, Chicago

The 2022 Collaborative Journalism Summit will be held in person in Chicago. Sponsored by Montclair State University and Columbia College Chicago, it promises two days of talks and workshops on collaborative journalism.

Computation+Journalism Conference

Date: June 9-11, New York and online

The C+J Conference will be held June 9, 10 and 11 both in person at Columbia University and online.

SRCCON

Date: June 22-24, online

SRCCON is the participant-led conference from OpenNews for journalists who want to transform their work, their organizations, and their communities.

Research Briefs

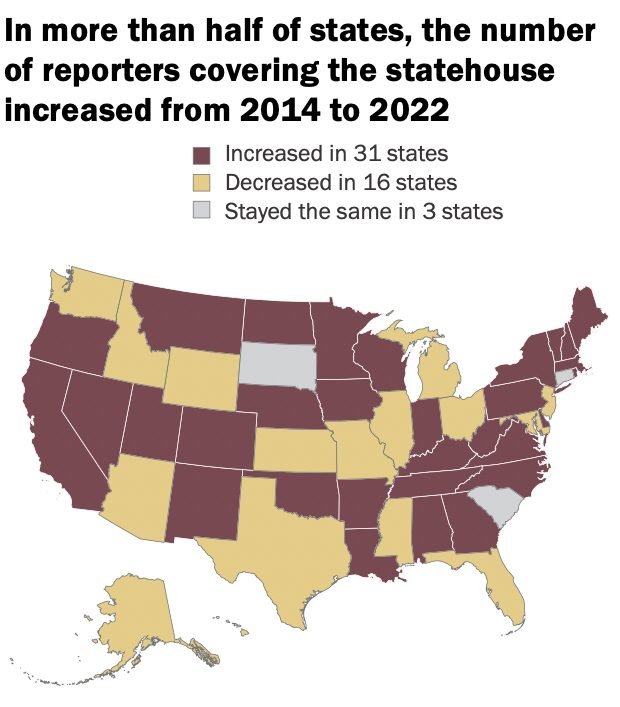

Nonprofit news outlets are playing a growing role in statehouse coverage

A new Pew Research Center study reveals that nonprofit news organizations are playing an increasingly significant role in covering state capitols. Nonprofit news orgs have recently grown in scale, with a reporter base that has nearly quadrupled since 2014. According to Pew, there are now 80 nonprofit newsrooms covering US statehouses. These newsrooms fill a gap in high-quality, statewide news.

The University of Michigan’s Ford School of Public Policy has assessed the implications of widespread use of large language models (LLMs), algorithms that can recognize, summarize, translate, predict and generate human language based on large textual datasets. While this technology holds great promise for sectors like public policy and scientific research, the report highlights three major risks posed by LLMs: inherent bias due to the nature of the data used to train the technology; the energy use and environmental implications of physical LLM processing; and the risk of further social fragmentation with mainstream usage.

Ford School of Public Policy, University of Michigan

Call for Proposals

JournalismAI Academy for Small Newsrooms

Deadline: June 8, 2022, 11:59 PM GMT

The JournalismAI Academy for Small Newsrooms is a FREE 8-week online program starting in September that offers a deep dive into the potential of artificial intelligence. It is designed for journalists and media professionals from small organizations (fewer than 50 employees). The Academy will consist of two cohorts: Americas (from GMT -8 to GMT -3) and Asia-Pacific (from GMT +5 to GMT +12).

Podcasts

AI Stories

The AI Stories podcast brings together some of the best data scientists, machine learning engineers, business leaders and researchers at the forefront of this technological revolution. The podcast, hosted by Neil Leiser, explores several issues that the industry is facing, including AI in healthcare, natural language generation, and social networks.

Food for Thought

A.I. Is Mastering Language. Should We Trust What It Says?

A flawless longform article by Steven Johnson in The New York Times Magazine about OpenAI’s GPT-3 and other neural nets that can now write original prose with mind-boggling fluency. The piece speaks not only to the status quo but also to the implications for the future.

AI Sommelier Generates Wine Reviews without Ever Opening a Bottle

A group of researchers has developed a new algorithm that can write reviews of beer and wine indistinguishable from something written by a human. But AI still lacks tastebuds, and can’t generate original reviews. The researchers are keen to test the capacity of the algorithm to predict what an unreviewed wine might taste like, and compare it to a human’s critique.

Google Says AI Generated Content Is Against Guidelines

Google’s Search Advocate says auto-generated content using AI writing tools is against Google’s Webmaster Guidelines. The topic was discussed during a Google Search Central SEO office-hours hangout in response to a question about GPT-3 AI writing tools. However, this kind of content may not be detected without the assistance of human reviewers.

What we are reading

The Internet Is Not What You Think It Is: A History, a Philosophy, a Warning

Professor of history and philosophy of science Justin Smith has published “The Internet Is Not What You Think It Is,” a history of the internet that runs from the ancient world to today and traces its utopian origins. Smith argues that while the potential of this technology continues to grow and shape human life in unprecedented ways, the utopian ideal that drove the internet’s development has been undone by the nefarious impacts of social media and the global information economy. Smith also draws attention to the connections among artificial intelligence, the internet, human history and the functioning of the natural world.

Quote of the month

“When people think about AI, they often think about ‘The Terminator’ or other Hollywood portrayals of it. These descriptions are extremely fun to talk about, but they are not real. AI is math. It is really beautiful, cool, complicated math, but it is not ‘The Terminator.’”

- Meredith Broussard, associate professor at the Arthur L. Carter Journalism Institute of NYU. Read the entire interview here.

The AI and Local News partners are Associated Press, The Brown Institute’s Local News Lab, NYC Media Lab and Partnership on AI.

The initiative is funded by Knight Foundation.

Newsletter produced by Angelo Paura