A.I. hiring software faces a regulatory reckoning

In early November, the New York City Council passed a bill that would require companies selling A.I.-powered hiring software to conduct third-party audits of their technology to ensure it doesn’t discriminate against women and people of color or exhibit other forms of bias.

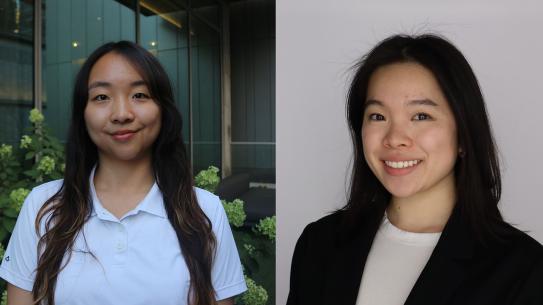

Julia Stoyanovich, an associate professor of computer science at New York University and a founding director of the school’s Center for Responsible AI, tells Fortune that the bill is a “big deal” because it represents the first attempt by any U.S. government to create regulations over “automated decision systems and hiring.” “There is a really tremendous need to be regulating the use of these tools, because whether you know it or not, essentially anybody who is on the job market is going to be screened by these tools at some point,” she says.

Currently, little is known about how widely A.I. hiring tools are used. The bill could change that. “At least in New York City, it will give us that information,” Stoyanovich says.